GitHub | 中文文档 | English Docs | Paper | Website

15-minute local setup · One repo per quest · Visible research progress · Human takeover anytime

Quick Start • Launch Your First Project • Product Tour • Model Setup

Unlike one-shot AI Scientist or autoresearch-style systems, DeepScientist is a local-first autonomous research studio. It keeps the full loop moving on your machine, from baselines and experiment rounds to paper-ready outputs, and takes about 15 minutes to set up. Powered by Findings Memory, Bayesian optimization, and the Research Map, it turns each new result into the next starting point and goes deeper through broader exploration and, when needed, thousands of validation experiments.

If you want the technical deep dive behind DeepScientist, watch the Video.


What drains researchers is often not the lack of ideas. It is the endless cycle of low-leverage work:

- new papers keep coming, but only a small fraction turns into an actionable next-step research plan

- baseline repos fail on environment, dependency, data, and script issues before real work even starts

- experiment results get scattered across terminals, scripts, notes, and chats, making later review painful

- writing, figures, and analysis live in separate tools, so turning them into a coherent paper takes far too long

This is the problem DeepScientist is built to solve:

turn fragmented, repetitive, easy-to-lose research work into a local AI workspace that can keep moving, keep accumulating, and keep getting stronger over time

It is not a tool that summarizes papers, throws you a few ideas, and leaves the dirty work to you.

It is much closer to a real long-running AI research partner:

| What common AI tools often look like | What DeepScientist does instead |
|---|---|
| Great at chatting, but context disappears quickly | Turns tasks, files, branches, artifacts, and memory into durable state |
| Good at suggesting ideas, but weak at sustained execution | Pushes papers, baselines, experiments, and writing inside one workspace |
| Strong automation, but feels like a black box | Lets you inspect the process through the web workspace, Canvas, files, and terminal |
| Hard to take over once it goes off track | Lets you pause, take over, edit plans, change code, and continue at any time |
| Each run ends when the run ends | Preserves failed paths, winning paths, and reproduction lessons for the next round |

DeepScientist is not a one-shot agent demo. It is a system built for long-horizon research work.

Start a real project from a paper or a research question:

- feed it a core paper, a GitHub repository, or a natural-language research objective

- it turns those inputs into an executable quest instead of a chat that loses state after a few turns

Reproduce baselines and keep the reproduction reusable:

- restore repositories, prepare environments, handle dependencies, and track the critical failures

- preserve what broke, what got fixed, and which steps are trustworthy for future rounds

Run experiments continuously instead of stopping after one pass:

- propose the next hypothesis from existing results

- branch, ablate, compare, and record conclusions

- keep failed routes as assets instead of deleting them

Turn results into materials you can ship:

- organize findings, conclusions, and analysis

- produce figures, reports, and paper drafts

- support local PDF and LaTeX compilation workflows

Follow the same research effort from multiple surfaces:

- the web workspace in your browser

- the TUI workflow on a remote server

- external connector surfaces for collaboration and progress updates

The current docs already cover several collaboration channels, including Weixin, QQ, Telegram, WhatsApp, and Feishu.

What retains users is not a flashy demo. It is a system that becomes more useful the longer you work with it.

DeepScientist tends to stick for four reasons:

- local-first by default: code, experiments, drafts, and project state stay on your own machine or server, which is especially valuable for unpublished ideas, sensitive experiment history, and longer-running research loops

- Git-native: every quest is a real Git repository, so branches, worktrees, files, and artifacts naturally express research structure

- inspectable: it does not only give you an output; you can inspect what it read, what it changed, what it kept, and what it plans to do next

- human takeover: DeepScientist can move autonomously, but you can also step in, edit, redirect, and hand control back whenever you want
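To make the "every quest is a real Git repository" point concrete, the sketch below uses plain Git commands to show how research routes can map onto branches and worktrees. It is only an illustration: the directory name `demo-quest` and the `route/...` branch names are made up for this example and do not describe DeepScientist's actual layout or CLI.

```shell
# Illustration with plain Git only; nothing here is a DeepScientist command.
# "demo-quest" and the route/* branch names are hypothetical.
work_root="$(mktemp -d)"
quest_dir="$work_root/demo-quest"

# One quest, one repository.
git init -q "$quest_dir"
git -C "$quest_dir" -c user.name="demo" -c user.email="demo@example.com" \
  commit -q --allow-empty -m "baseline reproduced"

# One branch plus one worktree per research route, so routes can be
# explored side by side without overwriting each other.
git -C "$quest_dir" worktree add -b route/ablation-a "$work_root/ablation-a" >/dev/null 2>&1
git -C "$quest_dir" worktree add -b route/ablation-b "$work_root/ablation-b" >/dev/null 2>&1

# Inspect the resulting structure: the main checkout plus two route worktrees.
git -C "$quest_dir" worktree list
git -C "$quest_dir" branch --list 'route/*'
```

Because each route lives on its own branch, a route that fails can simply stay in the history as a record instead of being deleted.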

DeepScientist is not just a concept. It is a real system with public docs, a public paper, and a public install path:

- 2026/03/24: DeepScientist officially released v1.5

- 2026/02/01: the paper went live on OpenReview for ICLR 2026

- npm install path is already available: `@researai/deepscientist`

- both Chinese and English docs are available, along with Web, TUI, and connector entry points

DeepScientist is a particularly good fit for:

- graduate students and engineers who want to reproduce papers and push beyond existing baselines

- labs or research teams running long experiment loops, ablations, and structured result analysis

- people who want code, experiments, notes, and writing to live in one workspace

- users who do not want to hand unpublished ideas and intermediate results directly to a pure cloud workflow

- people who want to run work on servers while following progress from web, TUI, or messaging surfaces

We believe a system that is actually suitable for research should at least satisfy these principles:

- one quest, one repository, instead of letting everything dissolve after a short conversation

- branches and worktrees should express research routes naturally instead of being forced into chat history

- failed paths should be preserved, summarized, and reused instead of overwritten

- human researchers should always retain takeover power instead of being locked outside the loop

- the research process should be reviewable, inspectable, and auditable instead of relying on "the model says it did it"

If that sounds like the way you want to work, DeepScientist is worth trying now.

If you want to try it right now, the shortest path is:

Platform note: DeepScientist fully supports Linux and macOS. Native Windows support is currently experimental; WSL2 is strongly recommended.

```shell
npm install -g @researai/deepscientist
codex login
ds --here
```

To stop the managed local daemon and all currently running agents:

```shell
ds --stop
```

If you prefer the interactive first-run flow, run this once first:

```shell
codex
```

If `codex` still appears to be missing after installing DeepScientist, take the explicit repair path instead of assuming the bundled dependency was linked correctly:

```shell
npm install -g @openai/codex
which codex
codex login
```

If `which codex` still prints nothing after that, fix the npm global bin path first, then retry `codex login` and `ds doctor`.
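One way to check the npm global bin path mentioned above is sketched below. It assumes the usual Linux and macOS layout, where npm links globally installed executables into `<prefix>/bin`; `path_has_dir` is a hypothetical helper written for this example, not part of npm, Codex, or DeepScientist.

```shell
# Hypothetical helper: succeeds if directory $1 is an exact entry of PATH.
path_has_dir() {
  case ":$PATH:" in
    *":$1:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Assumption: on Linux and macOS, global npm executables live in "<prefix>/bin".
if command -v npm >/dev/null 2>&1; then
  npm_bin="$(npm config get prefix)/bin"
  if path_has_dir "$npm_bin"; then
    echo "npm global bin is on PATH: $npm_bin"
  else
    echo "npm global bin is missing from PATH: $npm_bin"
    echo "add this to your shell profile:"
    echo "  export PATH=\"$npm_bin:\$PATH\""
  fi
else
  echo "npm not found; install Node.js and npm first"
fi
```

After adjusting the profile, open a new shell so that the updated `PATH` takes effect before retrying.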

After startup, the default local address is:

http://127.0.0.1:20999
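If the page does not load, a quick probe from the terminal can tell you whether anything is answering on that address. This is a minimal sketch using `curl`; `probe_url` is a hypothetical helper, and an "up" result only means that some HTTP server responded, not that the workspace is healthy.

```shell
# Hypothetical helper: prints "up" if something answers HTTP at the URL
# within 3 seconds, "down" otherwise (including when curl is unavailable).
probe_url() {
  if curl --silent --output /dev/null --max-time 3 "$1"; then
    echo "up"
  else
    echo "down"
  fi
}

# Default local address from the text above.
probe_url "http://127.0.0.1:20999"
```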

Local browser auth is now optional and disabled by default. If you want a per-launch local access password, start with:

```shell
ds --auth true
```

Then you only need to do three things:

- click Start Research

- fill in the research goal, baseline links, paper links, or local paths

- let DeepScientist start a real research project that can keep evolving locally
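For a first run, a short preflight check can catch the most common setup problems. The sketch below classifies the current platform according to the platform note above and adds two generic checks. `platform_support` is a hypothetical helper, and the mapping of `uname -s` values to support levels is an assumption based on that note, not an official compatibility matrix.

```shell
# Hypothetical helper: classify a kernel name as printed by `uname -s`.
platform_support() {
  case "$1" in
    Linux|Darwin) echo "supported" ;;             # Linux (including WSL2) and macOS
    MINGW*|MSYS*|CYGWIN*) echo "experimental" ;;  # native Windows shells
    *) echo "unknown" ;;
  esac
}

echo "platform: $(uname -s) -> $(platform_support "$(uname -s)")"

# Running as root is usually unnecessary for a first try.
if [ "$(id -u)" -eq 0 ]; then
  echo "warning: running as root; consider a non-root user"
fi

# The quick start relies on npm being available.
command -v npm >/dev/null 2>&1 || echo "warning: npm not found on PATH"
```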

If this is your first run, prefer an isolated environment, a non-root user, and a local machine. For the full details, see:

- 15 Codex Provider Setup

- 21 Local Model Backends Guide

- Weixin Connector Guide

- QQ Connector Guide

- Telegram Connector Guide

- WhatsApp Connector Guide

- Feishu Connector Guide

| System | System Type | E2E | Research Map | Workshop | Keeps Growing | Channels | Figure & Rebuttal & Review |
|---|---|---|---|---|---|---|---|
| autoresearch | Open-source | ✓ | | | | | |
| RD-Agent | Open-source | ✓ | | | | | |
| Agent Laboratory | Open-source | ✓ | ✓ | ✓ | | | |
| AI-Scientist | Open-source | ✓ | | | | | |
| AI-Scientist-v2 | Open-source | ✓ | | | | | |
| AutoResearchClaw | Open-source | ✓ | ✓ | ✓ | | | |
| ClawPhD | Open-source | ✓ | ✓ | | | | |
| Dr. Claw | Open-source | ✓ | ✓ | ✓ | | | |
| FARS | Closed-source | ✓ | | | | | |
| EvoScientist | Open-source | ✓ | ✓ | ✓ | ✓ | | |
| ScienceClaw | Open-source | ✓ | ✓ | | | | |
| claude-scholar | Open-source | ✓ | ✓ | ✓ | | | |
| Research-Claw | Open-source | ✓ | ✓ | ✓ | ✓ | | |
| DeepScientist | Open-source | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

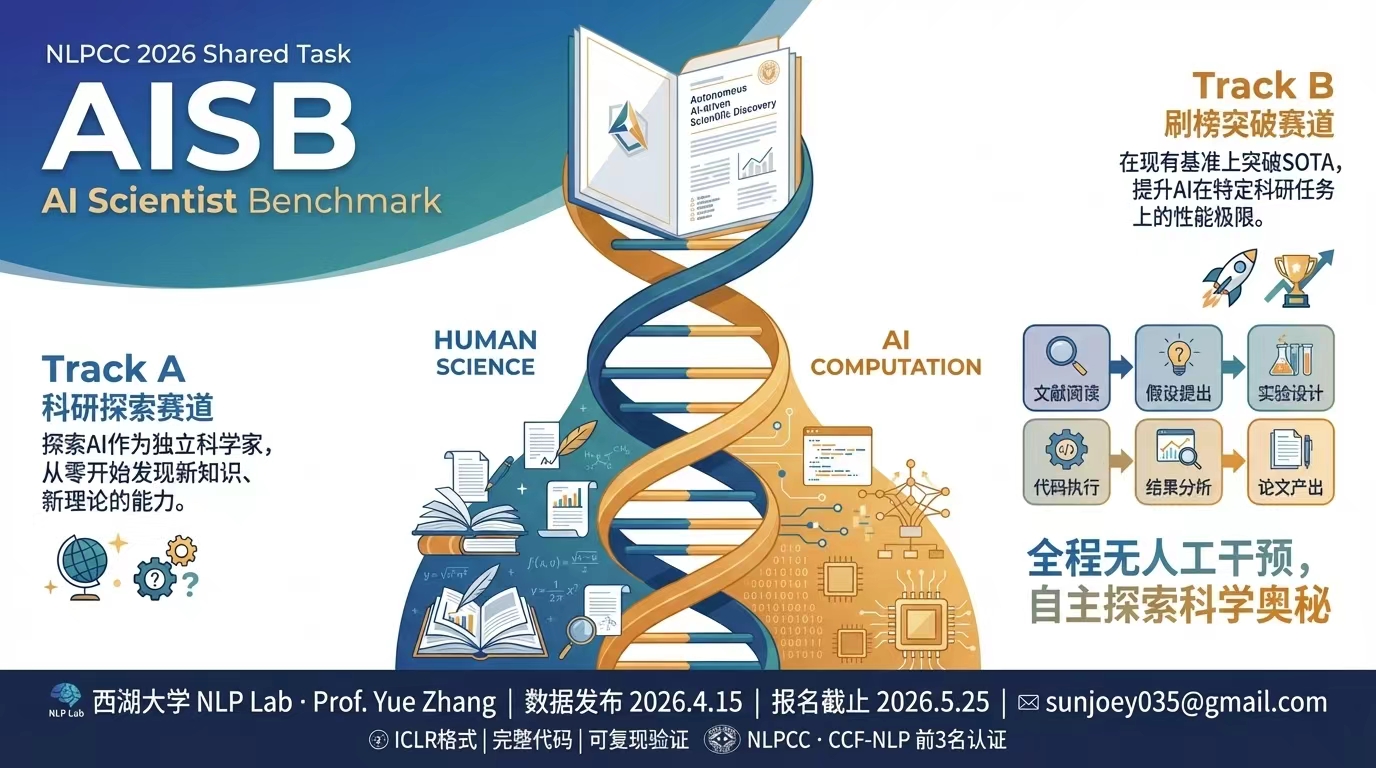

If you want to benchmark or extend AI scientist systems in the wild, the NLPCC 2026 AISB shared task is a natural next stop:

If you are developing or maintaining DeepScientist, continue with:

If DeepScientist helps your research or engineering work, please cite the paper below. DeepScientist is jointly developed by Yixuan Weng, Weixu Zhao, Shichen Li, Zhen Lin, and Minjun Zhu.

```bibtex
@inproceedings{
weng2026deepscientist,
title={DeepScientist: Advancing Frontier-Pushing Scientific Findings Progressively},
author={Yixuan Weng and Minjun Zhu and Qiujie Xie and QiYao Sun and Zhen Lin and Sifan Liu and Yue Zhang},
booktitle={The Fourteenth International Conference on Learning Representations},
year={2026},
url={https://openreview.net/forum?id=cZFgsLq8Gs}
}
```

If this feels like the research workflow you have been waiting for, give the project a star. Every star makes it easier for more researchers who actually need it to find it.

You are welcome to join the WeChat group for discussion.

If you like DeepScientist, you may also want to explore the rest of the ResearAI ecosystem:

| Project | What it does |
|---|---|
| MeOS | Fork yourself as a Skill, so agents understand you better |
| AutoFigure | Generate publication-ready figures |
| AutoFigure-Edit | Generate editable vector paper figures |
| DeepReviewer-v2 | Review papers and suggest revisions |
| Awesome-AI-Scientist | Curated AI scientist landscape |

We are building DeepScientist as a long-term local-first research operating system.

The next major upgrades focus on four directions:

Benchmarks and baselines:

- AI Scientist Benchmark support for more realistic evaluation and comparison

- smoother automatic baseline upload, download, and reuse

- stronger experiment replay, comparison, and paper-facing outputs

Memory and reuse:

- stronger Memory and Findings Memory mechanisms

- better cross-run and cross-quest reuse

- less repeated failure and less rediscovery cost over long projects

Capabilities and collaboration:

- VideoAnything-style multimodal research capabilities

- better local-model, connector, and copilot/autonomous collaboration flows

- a more efficient and more reliable DeepScientist system across local, collaborative, and long-horizon research settings

Safety and trustworthiness:

- safer local-first and server-side deployment defaults

- stronger auth, permission, and connector-surface protection

- less fabrication, lower hallucination, and more verification-grounded outputs

- better auditability for long-running autonomous research workflows

If this direction is interesting to you, please give the project a Watch and a Star:

This project is maintained by WestlakeNLP. If you run into problems, please ask on DeepWiki first; if it still cannot be resolved, open an issue.

WestlakeNLP is led by ACL Fellow Professor Yue Zhang. If you are interested in a long-term internship, PhD position, or research assistant opportunity, contact Professor Yue Zhang at zhangyue@westlake.edu.cn.