diff --git a/.github/CONTRIBUTING.md b/.github/CONTRIBUTING.md

new file mode 100644

index 0000000000..e1a953dc97

--- /dev/null

+++ b/.github/CONTRIBUTING.md

@@ -0,0 +1,235 @@

+# Contributing

+

+Thank you for being interested in contributing to HTTPX.

+There are many ways you can contribute to the project:

+

+- Try HTTPX and [report bugs/issues you find](https://github.com/encode/httpx/issues/new)

+- [Implement new features](https://github.com/encode/httpx/issues?q=is%3Aissue+is%3Aopen+label%3A%22good+first+issue%22)

+- [Review Pull Requests of others](https://github.com/encode/httpx/pulls)

+- Write documentation

+- Participate in discussions

+

+## Reporting Bugs or Other Issues

+

+Found something that HTTPX should support?

+Stumbled upon some unexpected behaviour?

+

+Contributions should generally start out with [a discussion](https://github.com/encode/httpx/discussions).

+Possible bugs may be raised as a "Potential Issue" discussion, and feature requests may

+be raised as an "Ideas" discussion. We can then determine if the discussion needs

+to be escalated into an "Issue" or not, or if we'd consider a pull request.

+

+Try to be as descriptive as you can and, in case of a bug report,

+provide as much information as possible like:

+

+- OS platform

+- Python version

+- Installed dependencies and versions (`python -m pip freeze`)

+- Code snippet

+- Error traceback
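
The environment details in the list above can be gathered with the standard library alone. The following is a minimal sketch, not part of the HTTPX tooling: the function name `collect_environment_info` and the shape of the returned dictionary are assumptions made for illustration.

```python
import platform
from importlib import metadata


def collect_environment_info():
    """Gather the environment details a bug report typically asks for."""
    # Installed distributions, similar in spirit to `python -m pip freeze`.
    packages = []
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        version = dist.metadata.get("Version")
        if name and version:  # skip entries with incomplete metadata
            packages.append(f"{name}=={version}")
    return {
        "os_platform": platform.platform(),
        "python_version": platform.python_version(),
        "packages": sorted(packages, key=str.lower),
    }


if __name__ == "__main__":
    info = collect_environment_info()
    print("OS platform:", info["os_platform"])
    print("Python version:", info["python_version"])
    print("\n".join(info["packages"]))
```

The printed output can be pasted into a discussion alongside the code snippet and the traceback.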

+

+You should always try to reduce any examples to the *simplest possible case*

+that demonstrates the issue.

+

+Some possibly useful tips for narrowing down potential issues...

+

+- Does the issue exist on HTTP/1.1, or HTTP/2, or both?

+- Does the issue exist with `Client`, `AsyncClient`, or both?

+- When using `AsyncClient` does the issue exist when using `asyncio` or `trio`, or both?

+

+## Development

+

+To start developing HTTPX, create a **fork** of the

+[HTTPX repository](https://github.com/encode/httpx) on GitHub.

+

+Then clone your fork with the following command, replacing `YOUR-USERNAME` with

+your GitHub username:

+

+```shell

+$ git clone https://github.com/YOUR-USERNAME/httpx

+```

+

+You can now install the project and its dependencies using:

+

+```shell

+$ cd httpx

+$ scripts/install

+```

+

+## Testing and Linting

+

+We use custom shell scripts to automate testing, linting,

+and documentation building workflows.

+

+To run the tests, use:

+

+```shell

+$ scripts/test

+```

+

+!!! warning

+ The test suite spawns testing servers on ports **8000** and **8001**.

+ Make sure these are not in use, so the tests can run properly.
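
One way to check the two ports before running the suite is to try binding to them. This is a rough sketch using only the standard library; the helper name `port_is_free` is hypothetical, and the loopback address `127.0.0.1` is an assumption about where the test servers listen.

```python
import socket


def port_is_free(port, host="127.0.0.1"):
    """Return True if a new socket can bind to host:port right now."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            # Typically "address already in use".
            return False
    return True


if __name__ == "__main__":
    for port in (8000, 8001):
        status = "free" if port_is_free(port) else "IN USE"
        print(f"port {port}: {status}")
```

The check is only a snapshot: another process could still claim a port between the check and the test run.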

+

+You can run a single test script like this:

+

+```shell

+$ scripts/test -- tests/test_multipart.py

+```

+

+To run the code auto-formatting:

+

+```shell

+$ scripts/lint

+```

+

+Lastly, to run code checks separately (they are also run as part of `scripts/test`), run:

+

+```shell

+$ scripts/check

+```

+

+## Documenting

+

+Documentation pages are located under the `docs/` folder.

+

+To run the documentation site locally (useful for previewing changes), use:

+

+```shell

+$ scripts/docs

+```

+

+## Resolving Build / CI Failures

+

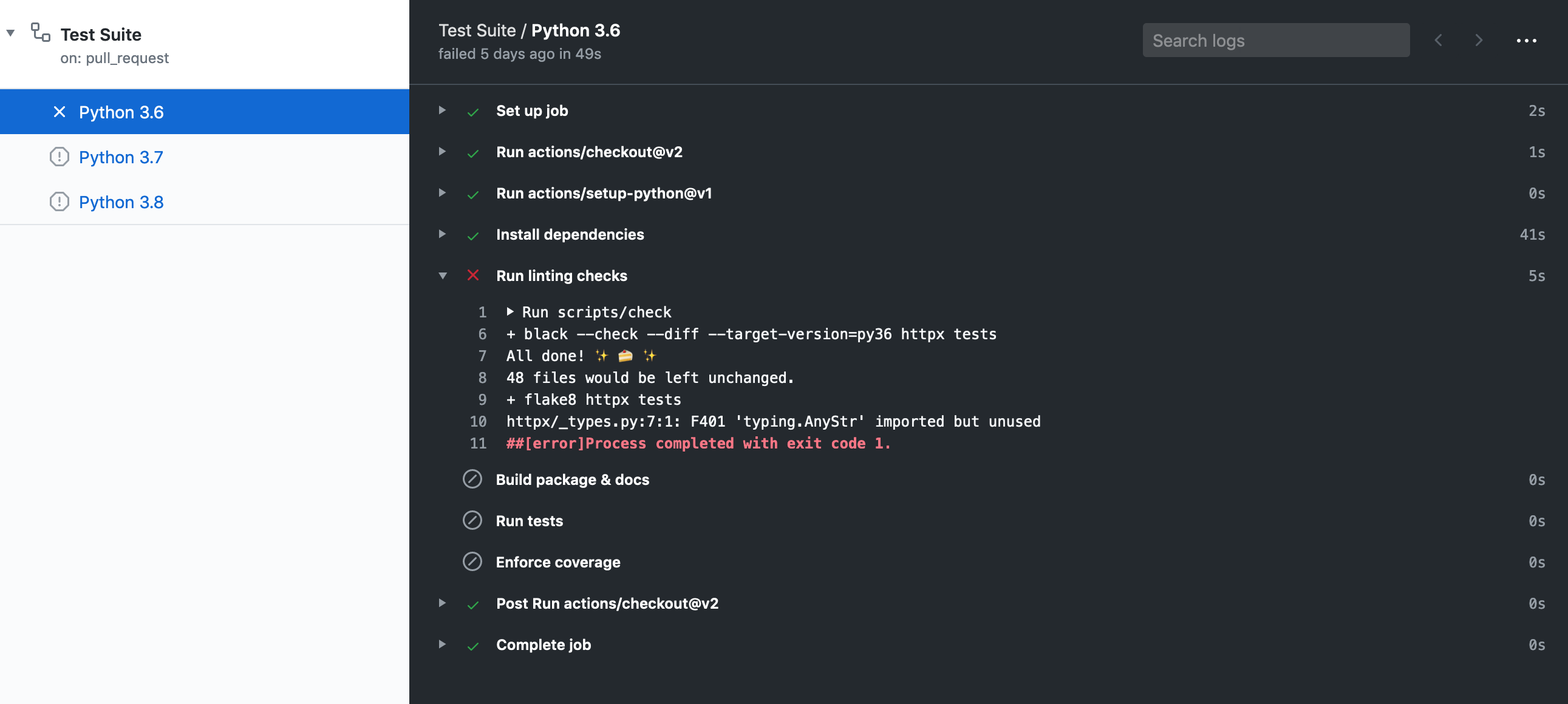

+Once you've submitted your pull request, the test suite will automatically run, and the results will show up in GitHub.

+If the test suite fails, you'll want to click through to the "Details" link, and try to identify why the test suite failed.

+

+


+

+

+Here are some common ways the test suite can fail:

+

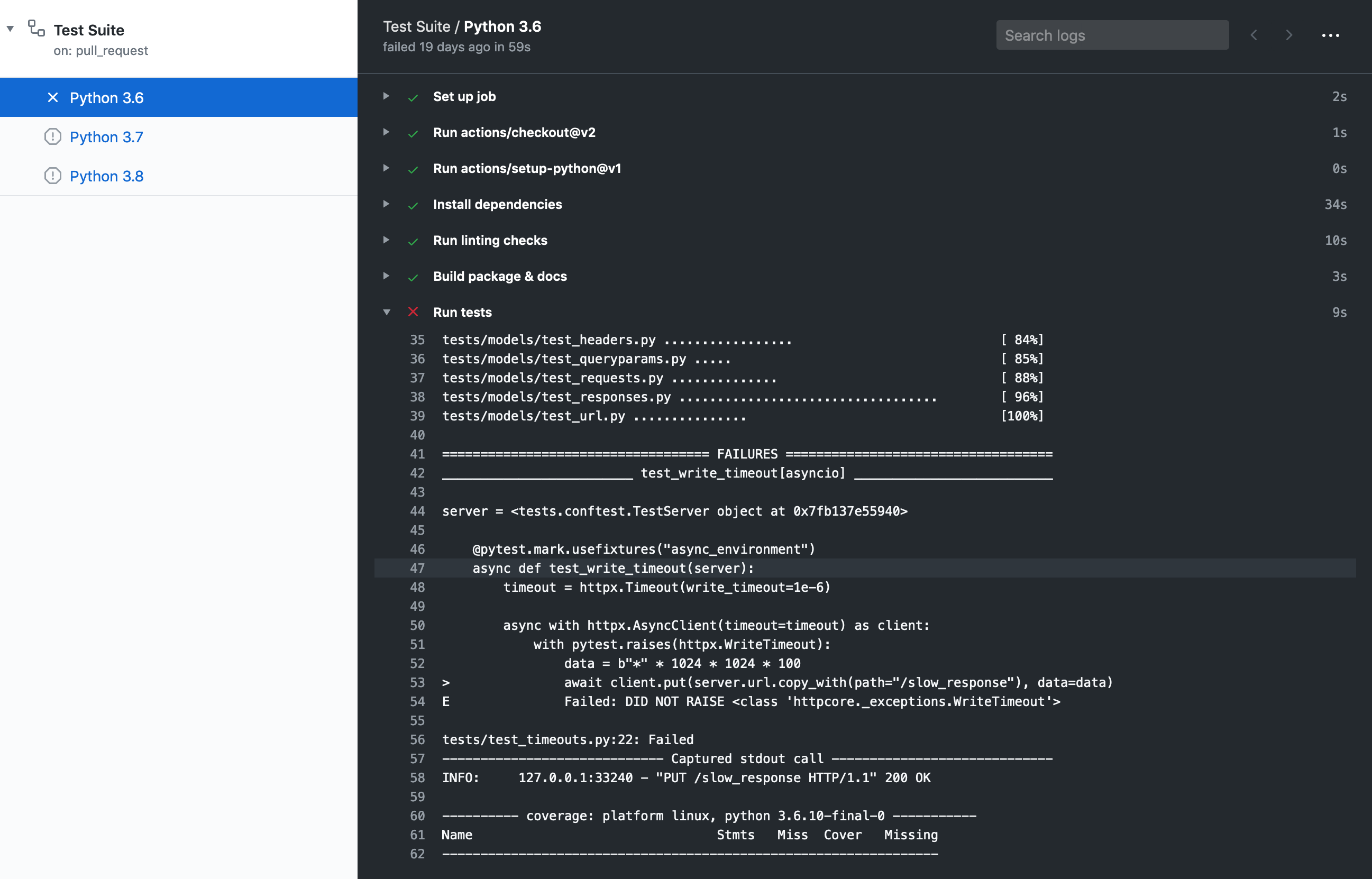

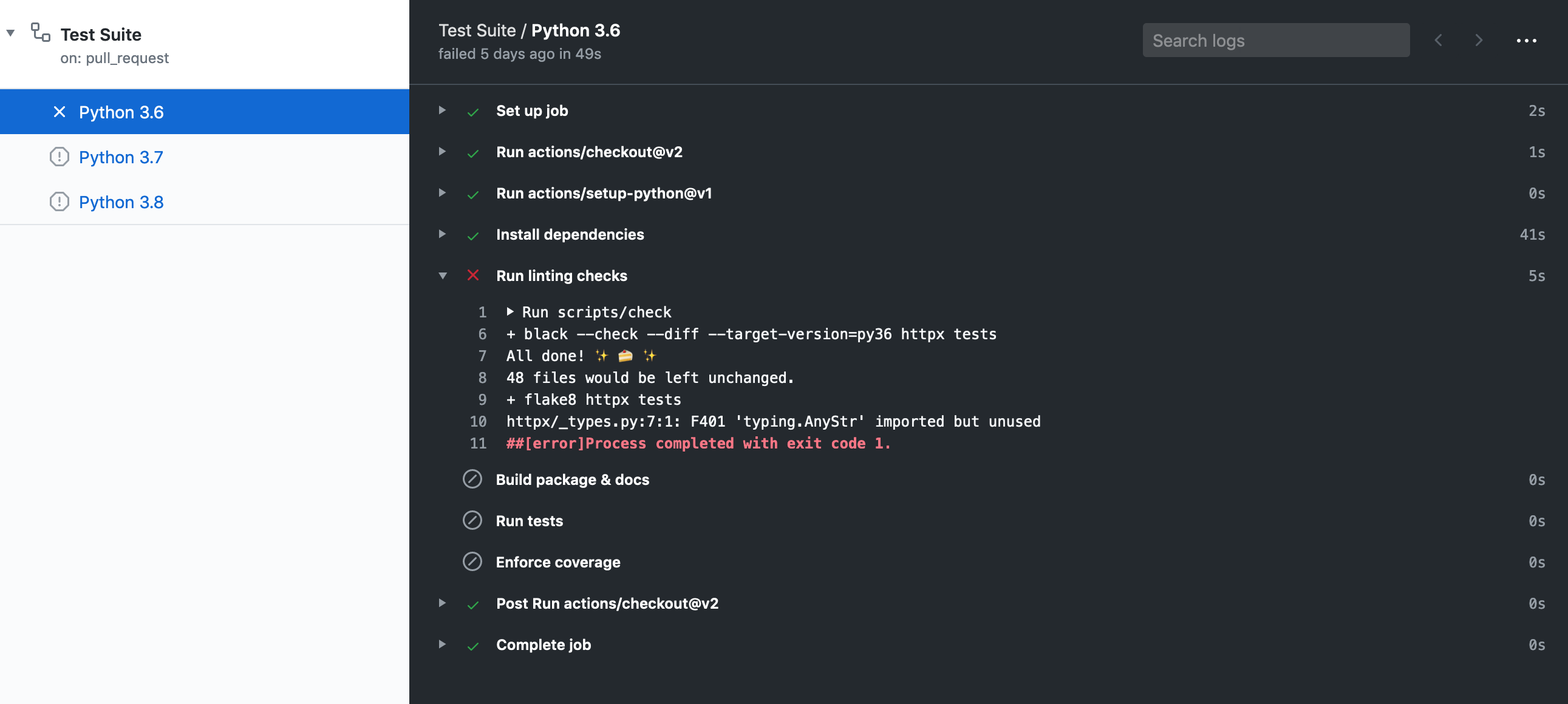

+### Check Job Failed

+

+


+

+

+This job failing means there is either a code formatting issue or type-annotation issue.

+You can look at the job output to figure out why it failed, or run the checks locally in a shell:

+

+```shell

+$ scripts/check

+```

+

+It may be worth running `scripts/lint` to attempt auto-formatting the code

+and, if that succeeds, committing the changes.

+

+### Docs Job Failed

+

+This job failing means the documentation failed to build. This can happen for

+a variety of reasons like invalid markdown or missing configuration within `mkdocs.yml`.

+

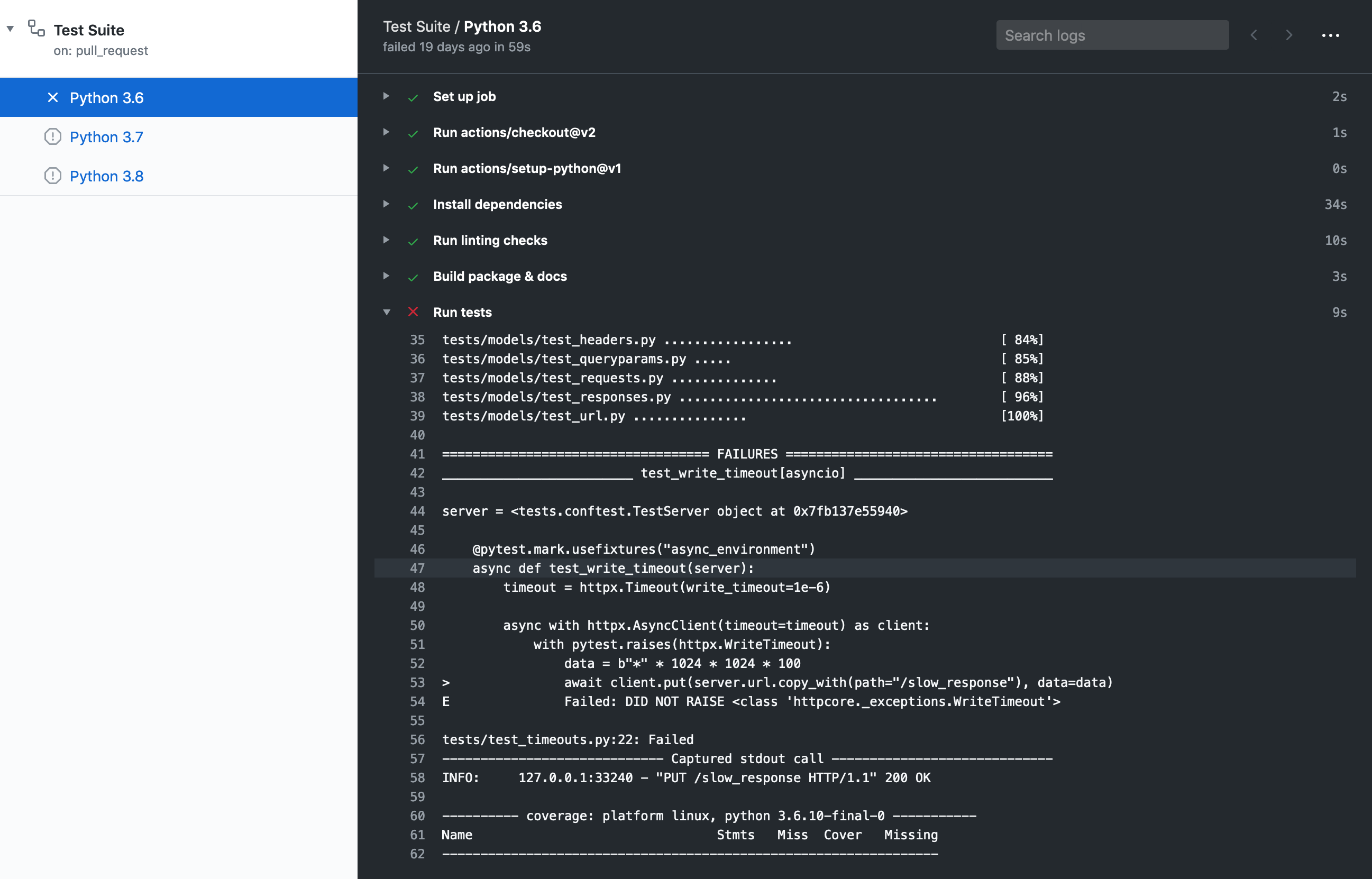

+### Python 3.X Job Failed

+

+


+

+

+This job failing means the unit tests failed or not all code paths are covered by unit tests.

+

+If tests are failing you will see this message under the coverage report:

+

+`=== 1 failed, 435 passed, 1 skipped, 1 xfailed in 11.09s ===`

+

+If tests succeed but coverage doesn't reach our current threshold, you will see this

+message under the coverage report:

+

+`FAIL Required test coverage of 100% not reached. Total coverage: 99.00%`

+

+## Releasing

+

+*This section is targeted at HTTPX maintainers.*

+

+Before releasing a new version, create a pull request that includes:

+

+- **An update to the changelog**:

+ - We follow the format from [keepachangelog](https://keepachangelog.com/en/1.0.0/).

+ - [Compare](https://github.com/encode/httpx/compare/) `master` with the tag of the latest release, and list all entries that are of interest to our users:

+ - Things that **must** go in the changelog: added, changed, deprecated or removed features, and bug fixes.

+ - Things that **should not** go in the changelog: changes to documentation, tests or tooling.

+ - Try sorting entries in descending order of impact / importance.

+ - Keep it concise and to-the-point. 🎯

+- **A version bump**: see `__version__.py`.

+

+For an example, see [#1006](https://github.com/encode/httpx/pull/1006).

+

+Once the release PR is merged, create a

+[new release](https://github.com/encode/httpx/releases/new) including:

+

+- Tag version like `0.13.3`.

+- Release title `Version 0.13.3`

+- Description copied from the changelog.

+

+Once created this release will be automatically uploaded to PyPI.

+

+If something goes wrong with the PyPI job the release can be published using the

+`scripts/publish` script.

+

+## Development proxy setup

+

+To test and debug requests via a proxy it's best to run a proxy server locally.

+Any server should do, but HTTPCore's test suite uses

+[`mitmproxy`](https://mitmproxy.org/), which is written in Python, is fully

+featured, and has an excellent UI and tools for introspection of requests.

+

+You can install `mitmproxy` using `pip install mitmproxy` or [several

+other ways](https://docs.mitmproxy.org/stable/overview-installation/).

+

+`mitmproxy` does require setting up local TLS certificates for HTTPS requests,

+as its main purpose is to allow developers to inspect requests that pass through

+it. We can set them up as follows:

+

+1. [`pip install trustme-cli`](https://github.com/sethmlarson/trustme-cli/).

+2. `trustme-cli -i example.org www.example.org`, assuming you want to test

+connecting to that domain. This will create three files: `server.pem`,

+`server.key` and `client.pem`.

+3. `mitmproxy` requires a PEM file that includes the private key and the

+certificate so we need to concatenate them:

+`cat server.key server.pem > server.withkey.pem`.

+4. Start the proxy server `mitmproxy --certs server.withkey.pem`, or use the

+[other mitmproxy commands](https://docs.mitmproxy.org/stable/) with different

+UI options.
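
On platforms without `cat`, the concatenation in step 3 can be done from Python instead. The sketch below is only an illustration; the function name `combine_key_and_cert` is hypothetical, and unlike `cat` it adds a newline after the key if one is missing, so that the certificate block starts on its own line.

```python
from pathlib import Path


def combine_key_and_cert(key_path, cert_path, output_path):
    """Write the private key followed by the certificate into one PEM file."""
    key = Path(key_path).read_bytes()
    cert = Path(cert_path).read_bytes()
    # Each PEM block must begin on its own line.
    if not key.endswith(b"\n"):
        key += b"\n"
    output = Path(output_path)
    output.write_bytes(key + cert)
    return output


# Example call, using the file names from the steps above:
# combine_key_and_cert("server.key", "server.pem", "server.withkey.pem")
```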

+

+At this point the server is ready to start serving requests. You'll need to

+configure HTTPX as described in the

+[proxy section](https://www.python-httpx.org/advanced/#http-proxying) and

+the [SSL certificates section](https://www.python-httpx.org/advanced/#ssl-certificates).

+This is where our previously generated `client.pem` comes in:

+

+```python

+import httpx

+

+proxies = {"all": "http://127.0.0.1:8080/"}

+

+with httpx.Client(proxies=proxies, verify="/path/to/client.pem") as client:

+ response = client.get("https://example.org")

+ print(response.status_code) # should print 200

+```

+

+Note, however, that HTTPS requests will only succeed to the host specified

+in the SSL/TLS certificate we generated. HTTPS requests to other hosts will

+raise an error like:

+

+```

+ssl.SSLCertVerificationError: [SSL: CERTIFICATE_VERIFY_FAILED] certificate

+verify failed: Hostname mismatch, certificate is not valid for

+'duckduckgo.com'. (_ssl.c:1108)

+```

+

+If you want to make requests to more hosts you'll need to regenerate the

+certificates and include all the hosts you intend to connect to in the

+second step, i.e.

+

+`trustme-cli -i example.org www.example.org duckduckgo.com www.duckduckgo.com`

diff --git a/.github/FUNDING.yml b/.github/FUNDING.yml

new file mode 100644

index 0000000000..2f87d94ca1

--- /dev/null

+++ b/.github/FUNDING.yml

@@ -0,0 +1 @@

+github: encode

diff --git a/.github/ISSUE_TEMPLATE/1-issue.md b/.github/ISSUE_TEMPLATE/1-issue.md

new file mode 100644

index 0000000000..5c0f8af677

--- /dev/null

+++ b/.github/ISSUE_TEMPLATE/1-issue.md

@@ -0,0 +1,16 @@

+---

+name: Issue

+about: Please only raise an issue if you've been advised to do so after discussion. Thanks! 🙏

+---

+

+The starting point for issues should usually be a discussion...

+

+https://github.com/encode/httpx/discussions

+

+Possible bugs may be raised as a "Potential Issue" discussion, and feature requests may be raised as an "Ideas" discussion. We can then determine if the discussion needs to be escalated into an "Issue" or not.

+

+This will help us ensure that the "Issues" list properly reflects ongoing or needed work on the project.

+

+---

+

+- [ ] Initially raised as discussion #...

diff --git a/.github/ISSUE_TEMPLATE/2-bug-report.md b/.github/ISSUE_TEMPLATE/2-bug-report.md

deleted file mode 100644

index a206030729..0000000000

--- a/.github/ISSUE_TEMPLATE/2-bug-report.md

+++ /dev/null

@@ -1,61 +0,0 @@

----

-name: Bug report

-about: Report a bug to help improve this project

----

-

-### Checklist

-

-

-

-- [ ] The bug is reproducible against the latest release and/or `master`.

-- [ ] There are no similar issues or pull requests to fix it yet.

-

-### Describe the bug

-

-

-

-### To reproduce

-

-

-

-### Expected behavior

-

-

-

-### Actual behavior

-

-

-

-### Debugging material

-

-

-

-### Environment

-

-- OS:

-- Python version:

-- HTTPX version:

-- Async environment:

-- HTTP proxy:

-- Custom certificates:

-

-### Additional context

-

-

diff --git a/.github/ISSUE_TEMPLATE/3-feature-request.md b/.github/ISSUE_TEMPLATE/3-feature-request.md

deleted file mode 100644

index a4237e2840..0000000000

--- a/.github/ISSUE_TEMPLATE/3-feature-request.md

+++ /dev/null

@@ -1,34 +0,0 @@

----

-name: Feature request

-about: Suggest an idea for this project.

----

-

-### Checklist

-

-

-

-- [ ] There are no similar issues or pull requests for this yet.

-- [ ] I discussed this idea on the [community chat](https://gitter.im/encode/community) and feedback is positive.

-

-### Is your feature related to a problem? Please describe.

-

-

-

-## Describe the solution you would like.

-

-

-

-## Describe alternatives you considered

-

-

-

-## Additional context

-

-

-

diff --git a/.github/ISSUE_TEMPLATE/config.yml b/.github/ISSUE_TEMPLATE/config.yml

index 2ad6e8e270..a491aa3502 100644

--- a/.github/ISSUE_TEMPLATE/config.yml

+++ b/.github/ISSUE_TEMPLATE/config.yml

@@ -1,7 +1,11 @@

# Ref: https://help.github.com/en/github/building-a-strong-community/configuring-issue-templates-for-your-repository#configuring-the-template-chooser

-blank_issues_enabled: true

+blank_issues_enabled: false

contact_links:

-- name: Question

+- name: Discussions

+ url: https://github.com/encode/httpx/discussions

+ about: >

+ The "Discussions" forum is where you want to start. 💖

+- name: Chat

url: https://gitter.im/encode/community

about: >

- Ask a question

+ Our community chat forum.

diff --git a/.github/PULL_REQUEST_TEMPLATE.md b/.github/PULL_REQUEST_TEMPLATE.md

new file mode 100644

index 0000000000..13b7dfe1da

--- /dev/null

+++ b/.github/PULL_REQUEST_TEMPLATE.md

@@ -0,0 +1,9 @@

+The starting point for contributions should usually be [a discussion](https://github.com/encode/httpx/discussions)

+

+Simple documentation typos may be raised as stand-alone pull requests, but otherwise

+please ensure you've discussed your proposal prior to issuing a pull request.

+

+This will help us direct work appropriately, and ensure that any suggested changes

+have been okayed by the maintainers.

+

+- [ ] Initially raised as discussion #...

diff --git a/CHANGELOG.md b/CHANGELOG.md

index 992f4e4e38..46384c5963 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -4,14 +4,56 @@ All notable changes to this project will be documented in this file.

The format is based on [Keep a Changelog](https://keepachangelog.com/en/1.0.0/).

-## 0.17.1

+## 0.18.0 (27th April, 2021)

+

+The 0.18.x release series formalises our low-level Transport API, introducing the base classes `httpx.BaseTransport` and `httpx.AsyncBaseTransport`.

+

+See the "[Writing custom transports](https://www.python-httpx.org/advanced/#writing-custom-transports)" documentation and the [`httpx.BaseTransport.handle_request()`](https://github.com/encode/httpx/blob/397aad98fdc8b7580a5fc3e88f1578b4302c6382/httpx/_transports/base.py#L77-L147) docstring for more complete details on implementing custom transports.

+

+Pull request #1522 includes a checklist of differences from the previous `httpcore` transport API, for developers implementing custom transports.

+

+The following API changes have been issuing deprecation warnings since 0.17.0, and are now fully deprecated...

+

+* You should now use `httpx.codes` consistently instead of `httpx.StatusCodes`.

+* Use `limits=...` instead of `pool_limits=...`.

+* Use `proxies={"http://": ...}` instead of `proxies={"http": ...}` for scheme-specific mounting.

+

+### Changed

+

+* Transport instances now inherit from `httpx.BaseTransport` or `httpx.AsyncBaseTransport`,

+ and should implement either the `handle_request` method or `handle_async_request` method. (Pull #1522, #1550)

+* The `response.ext` property and `Response(ext=...)` argument are now named `extensions`. (Pull #1522)

+* The recommendation to not use `data=` in favour of `content=` has now been escalated to a deprecation warning. (Pull #1573)

+* Drop `Response(on_close=...)` from API, since it was a bit of leaking implementation detail. (Pull #1572)

+* When using a client instance, cookies should always be set on the client, rather than on a per-request basis. We prefer enforcing a stricter API here because it provides clearer expectations around cookie persistence, particularly when redirects occur. (Pull #1574)

+* The runtime exception `httpx.ResponseClosed` is now named `httpx.StreamClosed`. (#1584)

+* The `httpx.QueryParams` model now presents an immutable interface. There is a discussion on [the design and motivation here](https://github.com/encode/httpx/discussions/1599). Use `client.params = client.params.merge(...)` instead of `client.params.update(...)`. The basic query manipulation methods are `query.set(...)`, `query.add(...)`, and `query.remove()`. (#1600)

+

+### Added

+

+* The `Request` and `Response` classes can now be serialized using pickle. (#1579)

+* Handle `data={"key": [None|int|float|bool]}` cases. (Pull #1539)

+* Support `httpx.URL(**kwargs)`, for example `httpx.URL(scheme="https", host="www.example.com", path="/")`, or `httpx.URL("https://www.example.com/", username="tom@gmail.com", password="123 456")`. (Pull #1601)

+* Support `url.copy_with(params=...)`. (Pull #1601)

+* Add `url.params` property, returning an immutable `QueryParams` instance. (Pull #1601)

+* Support query manipulation methods on the URL class. These are `url.copy_set_param()`, `url.copy_add_param()`, `url.copy_remove_param()`, `url.copy_merge_params()`. (Pull #1601)

+* The `httpx.URL` class now performs port normalization, so `:80` ports are stripped from `http` URLs and `:443` ports are stripped from `https` URLs. (Pull #1603)

+* The `URL.host` property returns unicode strings for internationalized domain names. The `URL.raw_host` property returns byte strings with IDNA escaping applied. (Pull #1590)
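
The port normalization rule mentioned in the list above can be illustrated with the standard library. This is a sketch of the rule only, written against `urllib.parse`; it is not how `httpx.URL` is implemented, and the helper name `strip_default_port` is hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit

# Default ports for the two schemes named in the changelog entry.
DEFAULT_PORTS = {"http": 80, "https": 443}


def strip_default_port(url):
    """Drop an explicit port when it is the default for the URL's scheme."""
    parts = urlsplit(url)
    if parts.port is None or DEFAULT_PORTS.get(parts.scheme) != parts.port:
        return url
    # Rebuild the netloc without the trailing ":port".
    netloc = parts.netloc.rsplit(":", 1)[0]
    return urlunsplit(parts._replace(netloc=netloc))


print(strip_default_port("http://example.com:80/"))    # http://example.com/
print(strip_default_port("http://example.com:8080/"))  # http://example.com:8080/
```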

+

+### Fixed

+

+* Fix Content-Length for cases of `files=...` where unicode string is used as the file content. (Pull #1537)

+* Fix some cases of merging relative URLs against `Client(base_url=...)`. (Pull #1532)

+* The `request.content` attribute is now always available except for streaming content, which requires an explicit `.read()`. (Pull #1583)

+

+## 0.17.1 (March 15th, 2021)

### Fixed

* Type annotation on `CertTypes` allows `keyfile` and `password` to be optional. (Pull #1503)

* Fix httpcore pinned version. (Pull #1495)

-## 0.17.0

+## 0.17.0 (February 28th, 2021)

### Added

diff --git a/CONTRIBUTING.md b/CONTRIBUTING.md

deleted file mode 100644

index 73a8b3db48..0000000000

--- a/CONTRIBUTING.md

+++ /dev/null

@@ -1,5 +0,0 @@

-#### Thanks for considering contributing to HTTPX!

-

-Our [documentation on contributing to HTTPX](https://www.encode.io/httpx/contributing/)

-contains information on how to report bugs, write and test new features, and

-debug issues with your own changes.

diff --git a/LICENSE.md b/LICENSE.md

index 8963b9f219..ab79d16a3f 100644

--- a/LICENSE.md

+++ b/LICENSE.md

@@ -1,27 +1,12 @@

Copyright © 2019, [Encode OSS Ltd](https://www.encode.io/).

All rights reserved.

-Redistribution and use in source and binary forms, with or without

-modification, are permitted provided that the following conditions are met:

+Redistribution and use in source and binary forms, with or without modification, are permitted provided that the following conditions are met:

-* Redistributions of source code must retain the above copyright notice, this

- list of conditions and the following disclaimer.

+* Redistributions of source code must retain the above copyright notice, this list of conditions and the following disclaimer.

-* Redistributions in binary form must reproduce the above copyright notice,

- this list of conditions and the following disclaimer in the documentation

- and/or other materials provided with the distribution.

+* Redistributions in binary form must reproduce the above copyright notice, this list of conditions and the following disclaimer in the documentation and/or other materials provided with the distribution.

-* Neither the name of the copyright holder nor the names of its

- contributors may be used to endorse or promote products derived from

- this software without specific prior written permission.

+* Neither the name of the copyright holder nor the names of its contributors may be used to endorse or promote products derived from this software without specific prior written permission.

-THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS"

-AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE

-IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE

-DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT HOLDER OR CONTRIBUTORS BE LIABLE

-FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL

-DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR

-SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER

-CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY,

-OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

-OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

+THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT HOLDER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

diff --git a/README.md b/README.md

index 66b2f8688f..e85a0142c9 100644

--- a/README.md

+++ b/README.md

@@ -16,7 +16,7 @@

HTTPX is a fully featured HTTP client for Python 3, which provides sync and async APIs, and support for both HTTP/1.1 and HTTP/2.

**Note**: _HTTPX should be considered in beta. We believe we've got the public API to

-a stable point now, but would strongly recommend pinning your dependencies to the `0.17.*`

+a stable point now, but would strongly recommend pinning your dependencies to the `0.18.*`

release, so that you're able to properly review [API changes between package updates](https://github.com/encode/httpx/blob/master/CHANGELOG.md). A 1.0 release is expected to be issued sometime in 2021._

---

@@ -122,6 +122,7 @@ The HTTPX project relies on these excellent libraries:

* `rfc3986` - URL parsing & normalization.

* `idna` - Internationalized domain name support.

* `sniffio` - Async library autodetection.

+* `async_generator` - Backport support for `contextlib.asynccontextmanager`. *(Only required for Python 3.6)*

* `brotlipy` - Decoding for "brotli" compressed responses. *(Optional)*

A huge amount of credit is due to `requests` for the API layout that

diff --git a/docs/advanced.md b/docs/advanced.md

index 61bf4c1938..4438cb2d6f 100644

--- a/docs/advanced.md

+++ b/docs/advanced.md

@@ -667,7 +667,7 @@ You can control the connection pool size using the `limits` keyword

argument on the client. It takes instances of `httpx.Limits` which define:

- `max_keepalive`, number of allowable keep-alive connections, or `None` to always

-allow. (Defaults 10)

+allow. (Defaults 20)

- `max_connections`, maximum number of allowable connections, or `None` for no limits.

(Default 100)

@@ -945,6 +945,32 @@ client = httpx.Client(verify=False)

The `client.get(...)` method and other request methods *do not* support changing the SSL settings on a per-request basis. If you need different SSL settings in different cases you should use more than one client instance, with different settings on each. Each client will then be using an isolated connection pool with a specific fixed SSL configuration on all connections within that pool.

+### Client Side Certificates

+

+You can also specify a local cert to use as a client-side certificate: either a path to an SSL certificate file, a two-tuple of (certificate file, key file), or a three-tuple of (certificate file, key file, password).

+

+```python

+import httpx

+

+r = httpx.get("https://example.org", cert="path/to/client.pem")

+```

+

+Alternatively,

+

+```pycon

+>>> cert = ("path/to/client.pem", "path/to/client.key")

+>>> httpx.get("https://example.org", cert=cert)

+

+```

+

+or

+

+```pycon

+>>> cert = ("path/to/client.pem", "path/to/client.key", "password")

+>>> httpx.get("https://example.org", cert=cert)

+

+```

+

### Making HTTPS requests to a local server

When making requests to local servers, such as a development server running on `localhost`, you will typically be using unencrypted HTTP connections.

@@ -1015,31 +1041,39 @@ This [public gist](https://gist.github.com/florimondmanca/d56764d78d748eb9f73165

### Writing custom transports

-A transport instance must implement the Transport API defined by

-[`httpcore`](https://www.encode.io/httpcore/api/). You

-should either subclass `httpcore.AsyncHTTPTransport` to implement a transport to

-use with `AsyncClient`, or subclass `httpcore.SyncHTTPTransport` to implement a

-transport to use with `Client`.

+A transport instance must implement the low-level Transport API, which deals

+with sending a single request, and returning a response. You should either

+subclass `httpx.BaseTransport` to implement a transport to use with `Client`,

+or subclass `httpx.AsyncBaseTransport` to implement a transport to

+use with `AsyncClient`.

+

+At the layer of the transport API we're using plain primitives.

+No `Request` or `Response` models, no fancy `URL` or `Header` handling.

+This strict point of cut-off provides a clear design separation between the

+HTTPX API, and the low-level network handling.

+

+See the `handle_request` and `handle_async_request` docstrings for more details

+on the specifics of the Transport API.

A complete example of a custom transport implementation would be:

```python

import json

-import httpcore

+import httpx

-class HelloWorldTransport(httpcore.SyncHTTPTransport):

+class HelloWorldTransport(httpx.BaseTransport):

"""

A mock transport that always returns a JSON "Hello, world!" response.

"""

- def request(self, method, url, headers=None, stream=None, ext=None):

+ def handle_request(self, method, url, headers, stream, extensions):

message = {"text": "Hello, world!"}

content = json.dumps(message).encode("utf-8")

- stream = httpcore.PlainByteStream(content)

+ stream = httpx.ByteStream(content)

headers = [(b"content-type", b"application/json")]

- ext = {"http_version": b"HTTP/1.1"}

- return 200, headers, stream, ext

+ extensions = {}

+ return 200, headers, stream, extensions

```

Which we can use in the same way:

@@ -1084,24 +1118,23 @@ which transport an outgoing request should be routed via, with [the same style

used for specifying proxy routing](#routing).

```python

-import httpcore

import httpx

-class HTTPSRedirectTransport(httpcore.SyncHTTPTransport):

+class HTTPSRedirectTransport(httpx.BaseTransport):

"""

A transport that always redirects to HTTPS.

"""

- def request(self, method, url, headers=None, stream=None, ext=None):

+ def handle_request(self, method, url, headers, stream, extensions):

scheme, host, port, path = url

if port is None:

location = b"https://%s%s" % (host, path)

else:

location = b"https://%s:%d%s" % (host, port, path)

- stream = httpcore.PlainByteStream(b"")

+ stream = httpx.ByteStream(b"")

headers = [(b"location", location)]

- ext = {"http_version": b"HTTP/1.1"}

- return 303, headers, stream, ext

+ extensions = {}

+ return 303, headers, stream, extensions

# A client where any `http` requests are always redirected to `https`

diff --git a/docs/async.md b/docs/async.md

index 8ddee956ae..360be8feaa 100644

--- a/docs/async.md

+++ b/docs/async.md

@@ -237,3 +237,9 @@ async with httpx.AsyncClient(transport=transport, base_url="http://testserver")

```

See [the ASGI documentation](https://asgi.readthedocs.io/en/latest/specs/www.html#connection-scope) for more details on the `client` and `root_path` keys.

+

+## Startup/shutdown of ASGI apps

+

+It is not in the scope of HTTPX to trigger lifespan events of your app.

+

+However, it is suggested to use `LifespanManager` from [asgi-lifespan](https://github.com/florimondmanca/asgi-lifespan#usage) together with `AsyncClient`.

diff --git a/docs/code_of_conduct.md b/docs/code_of_conduct.md

new file mode 100644

index 0000000000..1647289871

--- /dev/null

+++ b/docs/code_of_conduct.md

@@ -0,0 +1,56 @@

+# Code of Conduct

+

+We expect contributors to our projects and online spaces to follow [the Python Software Foundation’s Code of Conduct](https://www.python.org/psf/conduct/).

+

+The Python community is made up of members from around the globe with a diverse set of skills, personalities, and experiences. It is through these differences that our community experiences great successes and continued growth. When you're working with members of the community, this Code of Conduct will help steer your interactions and keep Python a positive, successful, and growing community.

+

+## Our Community

+

+Members of the Python community are **open, considerate, and respectful**. Behaviours that reinforce these values contribute to a positive environment, and include:

+

+* **Being open.** Members of the community are open to collaboration, whether it's on PEPs, patches, problems, or otherwise.

+* **Focusing on what is best for the community.** We're respectful of the processes set forth in the community, and we work within them.

+* **Acknowledging time and effort.** We're respectful of the volunteer efforts that permeate the Python community. We're thoughtful when addressing the efforts of others, keeping in mind that often times the labor was completed simply for the good of the community.

+* **Being respectful of differing viewpoints and experiences.** We're receptive to constructive comments and criticism, as the experiences and skill sets of other members contribute to the whole of our efforts.

+* **Showing empathy towards other community members.** We're attentive in our communications, whether in person or online, and we're tactful when approaching differing views.

+* **Being considerate.** Members of the community are considerate of their peers -- other Python users.

+* **Being respectful.** We're respectful of others, their positions, their skills, their commitments, and their efforts.

+* **Gracefully accepting constructive criticism.** When we disagree, we are courteous in raising our issues.

+* **Using welcoming and inclusive language.** We're accepting of all who wish to take part in our activities, fostering an environment where anyone can participate and everyone can make a difference.

+

+## Our Standards

+

+Every member of our community has the right to have their identity respected. The Python community is dedicated to providing a positive experience for everyone, regardless of age, gender identity and expression, sexual orientation, disability, physical appearance, body size, ethnicity, nationality, race, or religion (or lack thereof), education, or socio-economic status.

+

+## Inappropriate Behavior

+

+Examples of unacceptable behavior by participants include:

+

+* Harassment of any participants in any form

+* Deliberate intimidation, stalking, or following

+* Logging or taking screenshots of online activity for harassment purposes

+* Publishing others' private information, such as a physical or electronic address, without explicit permission

+* Violent threats or language directed against another person

+* Incitement of violence or harassment towards any individual, including encouraging a person to commit suicide or to engage in self-harm

+* Creating additional online accounts in order to harass another person or circumvent a ban

+* Sexual language and imagery in online communities or in any conference venue, including talks

+* Insults, put downs, or jokes that are based upon stereotypes, that are exclusionary, or that hold others up for ridicule

+* Excessive swearing

+* Unwelcome sexual attention or advances

+* Unwelcome physical contact, including simulated physical contact (eg, textual descriptions like "hug" or "backrub") without consent or after a request to stop

+* Pattern of inappropriate social contact, such as requesting/assuming inappropriate levels of intimacy with others

+* Sustained disruption of online community discussions, in-person presentations, or other in-person events

+* Continued one-on-one communication after requests to cease

+* Other conduct that is inappropriate for a professional audience including people of many different backgrounds

+

+Community members asked to stop any inappropriate behavior are expected to comply immediately.

+

+## Enforcement

+

+We take Code of Conduct violations seriously, and will act to ensure our spaces are welcoming, inclusive, and professional environments to communicate in.

+

+If you need to raise a Code of Conduct report, you may do so privately by email to tom@tomchristie.com.

+

+Reports will be treated confidentially.

+

+Alternately you may [make a report to the Python Software Foundation](https://www.python.org/psf/conduct/reporting/).

diff --git a/docs/compatibility.md b/docs/compatibility.md

index 99faf43232..7aed9dc1ed 100644

--- a/docs/compatibility.md

+++ b/docs/compatibility.md

@@ -28,8 +28,8 @@ And using `data=...` to send form data:

httpx.post(..., data={"message": "Hello, world"})

```

-If you're using a type checking tool such as `mypy`, you'll see warnings issues if using test/byte content with the `data` argument.

-However, for compatibility reasons with `requests`, we do still handle the case where `data=...` is used with raw binary and text contents.

+Using `data=...` with raw text or bytes content will raise a deprecation warning,

+and is expected to be fully removed with the HTTPX 1.0 release.

## Content encoding

@@ -37,6 +37,26 @@ HTTPX uses `utf-8` for encoding `str` request bodies. For example, when using `c

For response bodies, assuming the server didn't send an explicit encoding then HTTPX will do its best to figure out an appropriate encoding. Unlike Requests which uses the `chardet` library, HTTPX relies on a plainer fallback strategy (basically attempting UTF-8, or using Windows-1252 as a fallback). This strategy should be robust enough to handle the vast majority of use cases.

+## Cookies

+

+If using a client instance, then cookies should always be set on the client rather than on a per-request basis.

+

+This usage is supported:

+

+```python

+client = httpx.Client(cookies=...)

+client.post(...)

+```

+

+This usage is **not** supported:

+

+```python

+client = httpx.Client()

+client.post(..., cookies=...)

+```

+

+We prefer enforcing a stricter API here because it provides clearer expectations around cookie persistence, particularly when redirects occur.

+

## Status Codes

In our documentation we prefer the uppercased versions, such as `codes.NOT_FOUND`, but also provide lower-cased versions for API compatibility with `requests`.

@@ -124,6 +144,11 @@ On the other hand, HTTPX uses [HTTPCore](https://github.com/encode/httpcore) as

`requests` omits `params` whose values are `None` (e.g. `requests.get(..., params={"foo": None})`). This is not supported by HTTPX.

+## HEAD redirection

+

+In `requests`, all top-level API functions follow redirects by default except `HEAD`.

+For consistency, HTTPX makes `HEAD` follow redirects by default.

+

## Determining the next redirect request

When using `allow_redirects=False`, the `requests` library exposes an attribute `response.next`, which can be used to obtain the next redirect request.

@@ -142,6 +167,6 @@ while request is not None:

`requests` allows event hooks to mutate `Request` and `Response` objects. See [examples](https://requests.readthedocs.io/en/master/user/advanced/#event-hooks) given in the documentation for `requests`.

-In HTTPX, event hooks may access properties of requests and responses, but event hook callbacks cannot mutate the original request/response.

+In HTTPX, event hooks may access properties of requests and responses, but event hook callbacks cannot mutate the original request/response.

If you are looking for more control, consider checking out [Custom Transports](advanced.md#custom-transports).

diff --git a/docs/contributing.md b/docs/contributing.md

index 9732c81059..e1a953dc97 100644

--- a/docs/contributing.md

+++ b/docs/contributing.md

@@ -12,10 +12,13 @@ There are many ways you can contribute to the project:

## Reporting Bugs or Other Issues

Found something that HTTPX should support?

-Stumbled upon some unexpected behavior?

+Stumbled upon some unexpected behaviour?

+

+Contributions should generally start out with [a discussion](https://github.com/encode/httpx/discussions).

+Possible bugs may be raised as a "Potential Issue" discussion, feature requests may

+be raised as an "Ideas" discussion. We can then determine if the discussion needs

+to be escalated into an "Issue" or not, or if we'd consider a pull request.

-Feel free to open an issue at the

-[issue tracker](https://github.com/encode/httpx/issues).

Try to be as descriptive as you can and, in case of a bug report,

provide as much information as possible like:

@@ -25,6 +28,15 @@ provide as much information as possible like:

- Code snippet

- Error traceback

+You should always try to reduce any examples to the *simplest possible case*

+that demonstrates the issue.

+

+Some possibly useful tips for narrowing down potential issues...

+

+- Does the issue exist on HTTP/1.1, or HTTP/2, or both?

+- Does the issue exist with `Client`, `AsyncClient`, or both?

+- When using `AsyncClient` does the issue exist when using `asyncio` or `trio`, or both?

+

## Development

To start developing HTTPX create a **fork** of the

diff --git a/docs/exceptions.md b/docs/exceptions.md

index 949ac47a19..3de8fc6b57 100644

--- a/docs/exceptions.md

+++ b/docs/exceptions.md

@@ -162,11 +162,11 @@ except httpx.HTTPStatusError as exc:

::: httpx.StreamConsumed

:docstring:

-::: httpx.ResponseNotRead

+::: httpx.StreamClosed

:docstring:

-::: httpx.RequestNotRead

+::: httpx.ResponseNotRead

:docstring:

-::: httpx.ResponseClosed

+::: httpx.RequestNotRead

:docstring:

diff --git a/docs/index.md b/docs/index.md

index 3da239f7e8..8a41dca3b1 100644

--- a/docs/index.md

+++ b/docs/index.md

@@ -27,7 +27,7 @@ HTTPX is a fully featured HTTP client for Python 3, which provides sync and asyn

!!! note

HTTPX should currently be considered in beta.

- We believe we've got the public API to a stable point now, but would strongly recommend pinning your dependencies to the `0.17.*` release, so that you're able to properly review [API changes between package updates](https://github.com/encode/httpx/blob/master/CHANGELOG.md).

+ We believe we've got the public API to a stable point now, but would strongly recommend pinning your dependencies to the `0.18.*` release, so that you're able to properly review [API changes between package updates](https://github.com/encode/httpx/blob/master/CHANGELOG.md).

A 1.0 release is expected to be issued sometime in 2021.

@@ -101,7 +101,7 @@ the [async support](async.md) section, or the [HTTP/2](http2.md) section.

The [Developer Interface](api.md) provides a comprehensive API reference.

-To find out about tools that integrate with HTTPX, see [Third Party Packages](third-party-packages.md).

+To find out about tools that integrate with HTTPX, see [Third Party Packages](third_party_packages.md).

## Dependencies

@@ -114,6 +114,7 @@ The HTTPX project relies on these excellent libraries:

* `rfc3986` - URL parsing & normalization.

* `idna` - Internationalized domain name support.

* `sniffio` - Async library autodetection.

+* `async_generator` - Backport support for `contextlib.asynccontextmanager`. *(Only required for Python 3.6)*

* `brotlipy` - Decoding for "brotli" compressed responses. *(Optional)*

A huge amount of credit is due to `requests` for the API layout that

diff --git a/docs/quickstart.md b/docs/quickstart.md

index 4f15549e71..4afaff2430 100644

--- a/docs/quickstart.md

+++ b/docs/quickstart.md

@@ -408,7 +408,8 @@ with additional API for accessing cookies by their domain or path.

## Redirection and History

-By default, HTTPX will follow redirects for anything except `HEAD` requests.

+By default, HTTPX will follow redirects for all HTTP methods.

+

The `history` property of the response can be used to inspect any followed redirects.

It contains a list of any redirect responses that were followed, in the order

@@ -436,16 +437,6 @@ You can modify the default redirection handling with the allow_redirects paramet

[]

```

-If you’re making a `HEAD` request, you can use this to enable redirection:

-

-```pycon

->>> r = httpx.head('http://github.com/', allow_redirects=True)

->>> r.url

-'https://github.com/'

->>> r.history

-[]

-```

-

## Timeouts

HTTPX defaults to including reasonable timeouts for all network operations,

diff --git a/docs/third-party-packages.md b/docs/third_party_packages.md

similarity index 78%

rename from docs/third-party-packages.md

rename to docs/third_party_packages.md

index 02a00e70a1..8c60b11d91 100644

--- a/docs/third-party-packages.md

+++ b/docs/third_party_packages.md

@@ -18,6 +18,18 @@ The ultimate Python library in building OAuth and OpenID Connect clients and ser

An asynchronous GitHub API library. Includes [HTTPX support](https://gidgethub.readthedocs.io/en/latest/httpx.html).

+### HTTPX-Auth

+

+[GitHub](https://github.com/Colin-b/httpx_auth) - [Documentation](https://colin-b.github.io/httpx_auth/)

+

+Provides authentication classes to be used with HTTPX [authentication parameter](advanced.md#customizing-authentication).

+

+### pytest-HTTPX

+

+[GitHub](https://github.com/Colin-b/pytest_httpx) - [Documentation](https://colin-b.github.io/pytest_httpx/)

+

+Provides `httpx_mock` [pytest](https://docs.pytest.org/en/latest/) fixture to mock HTTPX within test cases.

+

### RESPX

[GitHub](https://github.com/lundberg/respx) - [Documentation](https://lundberg.github.io/respx/)

diff --git a/httpx/__init__.py b/httpx/__init__.py

index 96d9e0c2f8..9a27790f4c 100644

--- a/httpx/__init__.py

+++ b/httpx/__init__.py

@@ -3,6 +3,7 @@

from ._auth import Auth, BasicAuth, DigestAuth

from ._client import AsyncClient, Client

from ._config import Limits, Proxy, Timeout, create_ssl_context

+from ._content import ByteStream

from ._exceptions import (

CloseError,

ConnectError,

@@ -22,8 +23,8 @@

RemoteProtocolError,

RequestError,

RequestNotRead,

- ResponseClosed,

ResponseNotRead,

+ StreamClosed,

StreamConsumed,

StreamError,

TimeoutException,

@@ -34,8 +35,14 @@

WriteTimeout,

)

from ._models import URL, Cookies, Headers, QueryParams, Request, Response

-from ._status_codes import StatusCode, codes

+from ._status_codes import codes

from ._transports.asgi import ASGITransport

+from ._transports.base import (

+ AsyncBaseTransport,

+ AsyncByteStream,

+ BaseTransport,

+ SyncByteStream,

+)

from ._transports.default import AsyncHTTPTransport, HTTPTransport

from ._transports.mock import MockTransport

from ._transports.wsgi import WSGITransport

@@ -45,10 +52,14 @@

"__title__",

"__version__",

"ASGITransport",

+ "AsyncBaseTransport",

+ "AsyncByteStream",

"AsyncClient",

"AsyncHTTPTransport",

"Auth",

+ "BaseTransport",

"BasicAuth",

+ "ByteStream",

"Client",

"CloseError",

"codes",

@@ -88,12 +99,12 @@

"RequestError",

"RequestNotRead",

"Response",

- "ResponseClosed",

"ResponseNotRead",

- "StatusCode",

"stream",

+ "StreamClosed",

"StreamConsumed",

"StreamError",

+ "SyncByteStream",

"Timeout",

"TimeoutException",

"TooManyRedirects",

diff --git a/httpx/__version__.py b/httpx/__version__.py

index 90fae6b2fb..b847686501 100644

--- a/httpx/__version__.py

+++ b/httpx/__version__.py

@@ -1,3 +1,3 @@

__title__ = "httpx"

__description__ = "A next generation HTTP client, for Python 3."

-__version__ = "0.17.1"

+__version__ = "0.18.0"

diff --git a/httpx/_api.py b/httpx/_api.py

index 8cfaf6dfda..ff40ce65e1 100644

--- a/httpx/_api.py

+++ b/httpx/_api.py

@@ -1,8 +1,9 @@

import typing

+from contextlib import contextmanager

-from ._client import Client, StreamContextManager

+from ._client import Client

from ._config import DEFAULT_TIMEOUT_CONFIG

-from ._models import Request, Response

+from ._models import Response

from ._types import (

AuthTypes,

CertTypes,

@@ -68,7 +69,8 @@ def request(

* **allow_redirects** - *(optional)* Enables or disables HTTP redirects.

* **verify** - *(optional)* SSL certificates (a.k.a CA bundle) used to

verify the identity of requested hosts. Either `True` (default CA bundle),

- a path to an SSL certificate file, or `False` (disable verification).

+ a path to an SSL certificate file, an `ssl.SSLContext`, or `False`

+ (which will disable verification).

* **cert** - *(optional)* An SSL certificate used by the requested host

to authenticate the client. Either a path to an SSL certificate file, or

two-tuple of (certificate file, key file), or a three-tuple of (certificate

@@ -88,7 +90,12 @@ def request(

```

"""

with Client(

- proxies=proxies, cert=cert, verify=verify, timeout=timeout, trust_env=trust_env

+ cookies=cookies,

+ proxies=proxies,

+ cert=cert,

+ verify=verify,

+ timeout=timeout,

+ trust_env=trust_env,

) as client:

return client.request(

method=method,

@@ -99,12 +106,12 @@ def request(

json=json,

params=params,

headers=headers,

- cookies=cookies,

auth=auth,

allow_redirects=allow_redirects,

)

+@contextmanager

def stream(

method: str,

url: URLTypes,

@@ -123,7 +130,7 @@ def stream(

verify: VerifyTypes = True,

cert: CertTypes = None,

trust_env: bool = True,

-) -> StreamContextManager:

+) -> typing.Iterator[Response]:

"""

Alternative to `httpx.request()` that streams the response body

instead of loading it into memory at once.

@@ -134,26 +141,22 @@ def stream(

[0]: /quickstart#streaming-responses

"""

- client = Client(proxies=proxies, cert=cert, verify=verify, trust_env=trust_env)

- request = Request(

- method=method,

- url=url,

- params=params,

- content=content,

- data=data,

- files=files,

- json=json,

- headers=headers,

- cookies=cookies,

- )

- return StreamContextManager(

- client=client,

- request=request,

- auth=auth,

- timeout=timeout,

- allow_redirects=allow_redirects,

- close_client=True,

- )

+ with Client(

+ cookies=cookies, proxies=proxies, cert=cert, verify=verify, trust_env=trust_env

+ ) as client:

+ with client.stream(

+ method=method,

+ url=url,

+ content=content,

+ data=data,

+ files=files,

+ json=json,

+ params=params,

+ headers=headers,

+ auth=auth,

+ allow_redirects=allow_redirects,

+ ) as response:

+ yield response

def get(
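The hunk above replaces `StreamContextManager` with generator-based context managers. A minimal sketch of that pattern follows, using only the standard library. `FakeResponse` and `stream_response` are hypothetical names for illustration and are not part of HTTPX; the point is that the `finally` clause closes the response whether the `with` block exits normally or raises.

```python
from contextlib import contextmanager
from typing import Iterator


class FakeResponse:
    """Hypothetical stand-in for a streaming response."""

    def __init__(self) -> None:
        self.closed = False

    def close(self) -> None:
        self.closed = True


@contextmanager
def stream_response() -> Iterator[FakeResponse]:
    response = FakeResponse()
    try:
        # Hand the open response to the body of the `with` block.
        yield response
    finally:
        # Runs on normal exit and when the block raises.
        response.close()


with stream_response() as ok_response:
    pass

try:
    with stream_response() as failed_response:
        raise ValueError("boom")
except ValueError:
    pass
```

Both `ok_response` and `failed_response` end up closed, which is the guarantee the `try`/`finally` around `yield response` is meant to provide.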

diff --git a/httpx/_client.py b/httpx/_client.py

index 3465a10b75..ae42e9eac6 100644

--- a/httpx/_client.py

+++ b/httpx/_client.py

@@ -2,12 +2,12 @@

import enum

import typing

import warnings

+from contextlib import contextmanager

from types import TracebackType

-import httpcore

-

from .__version__ import __version__

from ._auth import Auth, BasicAuth, FunctionAuth

+from ._compat import asynccontextmanager

from ._config import (

DEFAULT_LIMITS,

DEFAULT_MAX_REDIRECTS,

@@ -20,20 +20,24 @@

)

from ._decoders import SUPPORTED_DECODERS

from ._exceptions import (

- HTTPCORE_EXC_MAP,

InvalidURL,

RemoteProtocolError,

TooManyRedirects,

- map_exceptions,

+ request_context,

)

from ._models import URL, Cookies, Headers, QueryParams, Request, Response

from ._status_codes import codes

from ._transports.asgi import ASGITransport

+from ._transports.base import (

+ AsyncBaseTransport,

+ AsyncByteStream,

+ BaseTransport,

+ SyncByteStream,

+)

from ._transports.default import AsyncHTTPTransport, HTTPTransport

from ._transports.wsgi import WSGITransport

from ._types import (

AuthTypes,

- ByteStream,

CertTypes,

CookieTypes,

HeaderTypes,

@@ -53,7 +57,6 @@

get_environment_proxies,

get_logger,

same_origin,

- warn_deprecated,

)

# The type annotation for @classmethod and context managers here follows PEP 484

@@ -71,11 +74,65 @@

class ClientState(enum.Enum):

+ # UNOPENED:

+ # The client has been instantiated, but has not been used to send a request,

+ # or been opened by entering the context of a `with` block.

UNOPENED = 1

+ # OPENED:

+ # The client has either sent a request, or is within a `with` block.

OPENED = 2

+ # CLOSED:

+ # The client has either exited the `with` block, or `close()` has

+ # been called explicitly.

CLOSED = 3

+class BoundSyncStream(SyncByteStream):

+ """

+ A byte stream that is bound to a given response instance, and that

+ ensures the `response.elapsed` is set once the response is closed.

+ """

+

+ def __init__(

+ self, stream: SyncByteStream, response: Response, timer: Timer

+ ) -> None:

+ self._stream = stream

+ self._response = response

+ self._timer = timer

+

+ def __iter__(self) -> typing.Iterator[bytes]:

+ for chunk in self._stream:

+ yield chunk

+

+ def close(self) -> None:

+ seconds = self._timer.sync_elapsed()

+ self._response.elapsed = datetime.timedelta(seconds=seconds)

+ self._stream.close()

+

+

+class BoundAsyncStream(AsyncByteStream):

+ """

+ An async byte stream that is bound to a given response instance, and that

+ ensures the `response.elapsed` is set once the response is closed.

+ """

+

+ def __init__(

+ self, stream: AsyncByteStream, response: Response, timer: Timer

+ ) -> None:

+ self._stream = stream

+ self._response = response

+ self._timer = timer

+

+ async def __aiter__(self) -> typing.AsyncIterator[bytes]:

+ async for chunk in self._stream:

+ yield chunk

+

+ async def aclose(self) -> None:

+ seconds = await self._timer.async_elapsed()

+ self._response.elapsed = datetime.timedelta(seconds=seconds)

+ await self._stream.aclose()

+

+

class BaseClient:

def __init__(

self,
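The `BoundSyncStream` and `BoundAsyncStream` classes above wrap a stream so that closing it also records the elapsed time. A simplified, standard-library sketch of the same idea is shown here. `TimedStream` is a hypothetical class, and unlike the diff it stores the elapsed time on itself rather than on a bound response, and uses `time.perf_counter` in place of the `Timer` helper.

```python
import datetime
import time
from typing import Iterable, Iterator, Optional


class TimedStream:
    """Hypothetical wrapper that records elapsed time when the stream is closed."""

    def __init__(self, chunks: Iterable[bytes]) -> None:
        self._chunks = chunks
        self._started = time.perf_counter()
        self.elapsed: Optional[datetime.timedelta] = None

    def __iter__(self) -> Iterator[bytes]:
        # Delegate iteration to the wrapped chunks unchanged.
        yield from self._chunks

    def close(self) -> None:
        # The elapsed time only becomes available once the stream is closed.
        seconds = time.perf_counter() - self._started
        self.elapsed = datetime.timedelta(seconds=seconds)


stream = TimedStream([b"hello, ", b"world"])
body = b"".join(stream)
elapsed_before_close = stream.elapsed
stream.close()
```

Keeping the bookkeeping inside the stream wrapper is what lets the diff drop the `on_close` callback from the `Response` constructor.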

@@ -233,51 +290,6 @@ def params(self) -> QueryParams:

def params(self, params: QueryParamTypes) -> None:

self._params = QueryParams(params)

- def stream(

- self,

- method: str,

- url: URLTypes,

- *,

- content: RequestContent = None,

- data: RequestData = None,

- files: RequestFiles = None,

- json: typing.Any = None,

- params: QueryParamTypes = None,

- headers: HeaderTypes = None,

- cookies: CookieTypes = None,

- auth: typing.Union[AuthTypes, UnsetType] = UNSET,

- allow_redirects: bool = True,

- timeout: typing.Union[TimeoutTypes, UnsetType] = UNSET,

- ) -> "StreamContextManager":

- """

- Alternative to `httpx.request()` that streams the response body

- instead of loading it into memory at once.

-

- **Parameters**: See `httpx.request`.

-

- See also: [Streaming Responses][0]

-

- [0]: /quickstart#streaming-responses

- """

- request = self.build_request(

- method=method,

- url=url,

- content=content,

- data=data,

- files=files,

- json=json,

- params=params,

- headers=headers,

- cookies=cookies,

- )

- return StreamContextManager(

- client=self,

- request=request,

- auth=auth,

- allow_redirects=allow_redirects,

- timeout=timeout,

- )

-

def build_request(

self,

method: str,

@@ -325,10 +337,19 @@ def _merge_url(self, url: URLTypes) -> URL:

"""

merge_url = URL(url)

if merge_url.is_relative_url:

- # We always ensure the base_url paths include the trailing '/',

- # and always strip any leading '/' from the merge URL.

- merge_url = merge_url.copy_with(raw_path=merge_url.raw_path.lstrip(b"/"))

- return self.base_url.join(merge_url)

+ # To merge URLs we always append to the base URL. To get this

+ # behaviour correct we always ensure the base URL ends in a '/'

+ # separator, and strip any leading '/' from the merge URL.

+ #

+ # So, eg...

+ #

+ # >>> client = Client(base_url="https://www.example.com/subpath")

+ # >>> client.base_url

+ # URL('https://www.example.com/subpath/')

+ # >>> client.build_request("GET", "/path").url

+ # URL('https://www.example.com/subpath/path')

+ merge_raw_path = self.base_url.raw_path + merge_url.raw_path.lstrip(b"/")

+ return self.base_url.copy_with(raw_path=merge_raw_path)

return merge_url

def _merge_cookies(
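The append-only merge rule described in the `_merge_url` comment can be sketched with plain byte strings. This is an illustration under stated assumptions, not the HTTPX implementation: `merge_raw_path` is a hypothetical helper, and it adds the trailing `/` itself, whereas the comment says the client normalises `base_url` ahead of time. The `urljoin` call is included only to contrast with standard relative-reference resolution.

```python
from urllib.parse import urljoin


def merge_raw_path(base_raw_path: bytes, raw_path: bytes) -> bytes:
    """Hypothetical helper: always append the relative path to the base path."""
    # Assumption: make sure the base path ends in a '/' separator.
    if not base_raw_path.endswith(b"/"):
        base_raw_path += b"/"
    # Strip any leading '/' so the path is appended instead of replacing the base.
    return base_raw_path + raw_path.lstrip(b"/")


appended = merge_raw_path(b"/subpath", b"/path")
# For comparison, standard URL resolution lets an absolute path replace the base path.
joined = urljoin("https://www.example.com/subpath/", "/path")
```

Here `appended` keeps the `/subpath/` prefix, while `joined` drops it, which appears to be the behaviour the new code avoids by no longer calling `base_url.join(...)`.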

@@ -364,7 +385,7 @@ def _merge_queryparams(

"""

if params or self.params:

merged_queryparams = QueryParams(self.params)

- merged_queryparams.update(params)

+ merged_queryparams = merged_queryparams.merge(params)

return merged_queryparams

return params

@@ -494,7 +515,7 @@ def _redirect_headers(self, request: Request, url: URL, method: str) -> Headers:

def _redirect_stream(

self, request: Request, method: str

- ) -> typing.Optional[ByteStream]:

+ ) -> typing.Optional[typing.Union[SyncByteStream, AsyncByteStream]]:

"""

Return the body that should be used for the redirect request.

"""

@@ -527,7 +548,8 @@ class Client(BaseClient):

sending requests.

* **verify** - *(optional)* SSL certificates (a.k.a CA bundle) used to

verify the identity of requested hosts. Either `True` (default CA bundle),

- a path to an SSL certificate file, or `False` (disable verification).

+ a path to an SSL certificate file, an `ssl.SSLContext`, or `False`

+ (which will disable verification).

* **cert** - *(optional)* An SSL certificate used by the requested host

to authenticate the client. Either a path to an SSL certificate file, or

two-tuple of (certificate file, key file), or a three-tuple of (certificate

@@ -560,14 +582,13 @@ def __init__(

cert: CertTypes = None,

http2: bool = False,

proxies: ProxiesTypes = None,

- mounts: typing.Mapping[str, httpcore.SyncHTTPTransport] = None,

+ mounts: typing.Mapping[str, BaseTransport] = None,

timeout: TimeoutTypes = DEFAULT_TIMEOUT_CONFIG,

limits: Limits = DEFAULT_LIMITS,

- pool_limits: Limits = None,

max_redirects: int = DEFAULT_MAX_REDIRECTS,

event_hooks: typing.Mapping[str, typing.List[typing.Callable]] = None,

base_url: URLTypes = "",

- transport: httpcore.SyncHTTPTransport = None,

+ transport: BaseTransport = None,

app: typing.Callable = None,

trust_env: bool = True,

):

@@ -592,13 +613,6 @@ def __init__(

"Make sure to install httpx using `pip install httpx[http2]`."

) from None

- if pool_limits is not None:

- warn_deprecated(

- "Client(..., pool_limits=...) is deprecated and will raise "

- "errors in the future. Use Client(..., limits=...) instead."

- )

- limits = pool_limits

-

allow_env_proxies = trust_env and app is None and transport is None

proxy_map = self._get_proxy_map(proxies, allow_env_proxies)

@@ -611,9 +625,7 @@ def __init__(

app=app,

trust_env=trust_env,

)

- self._mounts: typing.Dict[

- URLPattern, typing.Optional[httpcore.SyncHTTPTransport]

- ] = {

+ self._mounts: typing.Dict[URLPattern, typing.Optional[BaseTransport]] = {

URLPattern(key): None

if proxy is None

else self._init_proxy_transport(

@@ -639,10 +651,10 @@ def _init_transport(

cert: CertTypes = None,

http2: bool = False,

limits: Limits = DEFAULT_LIMITS,

- transport: httpcore.SyncHTTPTransport = None,

+ transport: BaseTransport = None,

app: typing.Callable = None,

trust_env: bool = True,

- ) -> httpcore.SyncHTTPTransport:

+ ) -> BaseTransport:

if transport is not None:

return transport

@@ -661,7 +673,7 @@ def _init_proxy_transport(

http2: bool = False,

limits: Limits = DEFAULT_LIMITS,

trust_env: bool = True,

- ) -> httpcore.SyncHTTPTransport:

+ ) -> BaseTransport:

return HTTPTransport(

verify=verify,

cert=cert,

@@ -671,7 +683,7 @@ def _init_proxy_transport(

proxy=proxy,

)

- def _transport_for_url(self, url: URL) -> httpcore.SyncHTTPTransport:

+ def _transport_for_url(self, url: URL) -> BaseTransport:

"""

Returns the transport instance that should be used for a given URL.

This will either be the standard connection pool, or a proxy.

@@ -714,6 +726,14 @@ def request(

[0]: /advanced/#merging-of-configuration

"""

+ if cookies is not None:

+ message = (

+ "Setting per-request cookies=<...> is being deprecated, because "

+ "the expected behaviour on cookie persistence is ambiguous. Set "

+ "cookies directly on the client instance instead."

+ )

+ warnings.warn(message, DeprecationWarning)

+

request = self.build_request(

method=method,

url=url,
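The deprecation path added above can be exercised in isolation. The sketch below uses only the standard `warnings` module. The `request` function is a hypothetical stand-in for the client method, and its message is shortened from the one in the diff. It shows that a warning is emitted only when `cookies` is passed explicitly.

```python
import warnings
from typing import Optional


def request(url: str, cookies: Optional[dict] = None) -> str:
    """Hypothetical stand-in for a client method that accepts per-request cookies."""
    if cookies is not None:
        warnings.warn(
            "Setting per-request cookies is being deprecated. "
            "Set cookies on the client instance instead.",
            DeprecationWarning,
        )
    return url


with warnings.catch_warnings(record=True) as caught:
    # Make sure DeprecationWarning is recorded regardless of the default filters.
    warnings.simplefilter("always")
    request("https://www.example.com/", cookies={"session": "abc"})
    request("https://www.example.com/")

deprecations = [w for w in caught if issubclass(w.category, DeprecationWarning)]
```

Only the first call contributes an entry to `deprecations`; callers that set cookies on the client are unaffected.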

@@ -729,6 +749,56 @@ def request(

request, auth=auth, allow_redirects=allow_redirects, timeout=timeout

)

+ @contextmanager

+ def stream(

+ self,

+ method: str,

+ url: URLTypes,

+ *,

+ content: RequestContent = None,

+ data: RequestData = None,

+ files: RequestFiles = None,

+ json: typing.Any = None,

+ params: QueryParamTypes = None,

+ headers: HeaderTypes = None,

+ cookies: CookieTypes = None,

+ auth: typing.Union[AuthTypes, UnsetType] = UNSET,

+ allow_redirects: bool = True,

+ timeout: typing.Union[TimeoutTypes, UnsetType] = UNSET,

+ ) -> typing.Iterator[Response]:

+ """

+ Alternative to `httpx.request()` that streams the response body

+ instead of loading it into memory at once.

+

+ **Parameters**: See `httpx.request`.

+

+ See also: [Streaming Responses][0]

+

+ [0]: /quickstart#streaming-responses

+ """

+ request = self.build_request(

+ method=method,

+ url=url,

+ content=content,

+ data=data,

+ files=files,

+ json=json,

+ params=params,

+ headers=headers,

+ cookies=cookies,

+ )

+ response = self.send(

+ request=request,

+ auth=auth,

+ allow_redirects=allow_redirects,

+ timeout=timeout,

+ stream=True,

+ )

+ try:

+ yield response

+ finally:

+ response.close()

+

def send(

self,

request: Request,

@@ -766,21 +836,18 @@ def send(

allow_redirects=allow_redirects,

history=[],

)

-

- if not stream:

- try:

+ try:

+ if not stream:

response.read()

- finally:

- response.close()

- try:

for hook in self._event_hooks["response"]:

hook(response)

- except Exception:

- response.close()

- raise

- return response

+ return response

+

+ except Exception as exc:

+ response.close()

+ raise exc

def _send_handling_auth(

self,

@@ -804,18 +871,20 @@ def _send_handling_auth(

history=history,

)

try:

- next_request = auth_flow.send(response)

- except StopIteration:

- return response

- except BaseException as exc:

- response.close()

- raise exc from None

- else:

+ try:

+ next_request = auth_flow.send(response)

+ except StopIteration:

+ return response

+

response.history = list(history)

response.read()

request = next_request

history.append(response)

+ except Exception as exc:

+ response.close()

+ raise exc

+

def _send_handling_redirects(

self,

request: Request,

@@ -830,19 +899,24 @@ def _send_handling_redirects(

)

response = self._send_single_request(request, timeout)

- response.history = list(history)

+ try:

+ response.history = list(history)

- if not response.is_redirect:

- return response

+ if not response.is_redirect:

+ return response

- if allow_redirects:

- response.read()

- request = self._build_redirect_request(request, response)

- history = history + [response]

+ request = self._build_redirect_request(request, response)

+ history = history + [response]

- if not allow_redirects:

- response.next_request = request

- return response

+ if allow_redirects:

+ response.read()

+ else:

+ response.next_request = request

+ return response

+

+ except Exception as exc:

+ response.close()

+ raise exc

def _send_single_request(self, request: Request, timeout: Timeout) -> Response:

"""

@@ -852,29 +926,29 @@ def _send_single_request(self, request: Request, timeout: Timeout) -> Response:

timer = Timer()

timer.sync_start()

- with map_exceptions(HTTPCORE_EXC_MAP, request=request):

- (status_code, headers, stream, ext) = transport.request(

+ if not isinstance(request.stream, SyncByteStream):

+ raise RuntimeError(

+ "Attempted to send an async request with a sync Client instance."

+ )

+

+ with request_context(request=request):

+ (status_code, headers, stream, extensions) = transport.handle_request(

request.method.encode(),

request.url.raw,

headers=request.headers.raw,

- stream=request.stream, # type: ignore

- ext={"timeout": timeout.as_dict()},

+ stream=request.stream,

+ extensions={"timeout": timeout.as_dict()},

)

- def on_close(response: Response) -> None:

- response.elapsed = datetime.timedelta(seconds=timer.sync_elapsed())

- if hasattr(stream, "close"):

- stream.close()

-

response = Response(

status_code,

headers=headers,

- stream=stream, # type: ignore

- ext=ext,

+ stream=stream,

+ extensions=extensions,

request=request,

- on_close=on_close,

)

+ response.stream = BoundSyncStream(stream, response=response, timer=timer)

self.cookies.extract_cookies(response)

status = f"{response.status_code} {response.reason_phrase}"

@@ -1131,7 +1205,8 @@ def __exit__(

transport.__exit__(exc_type, exc_value, traceback)

def __del__(self) -> None:

- self.close()

+ if self._state == ClientState.OPENED:

+ self.close()

class AsyncClient(BaseClient):

@@ -1193,14 +1268,13 @@ def __init__(

cert: CertTypes = None,

http2: bool = False,

proxies: ProxiesTypes = None,

- mounts: typing.Mapping[str, httpcore.AsyncHTTPTransport] = None,

+ mounts: typing.Mapping[str, AsyncBaseTransport] = None,

timeout: TimeoutTypes = DEFAULT_TIMEOUT_CONFIG,

limits: Limits = DEFAULT_LIMITS,

- pool_limits: Limits = None,

max_redirects: int = DEFAULT_MAX_REDIRECTS,

event_hooks: typing.Mapping[str, typing.List[typing.Callable]] = None,

base_url: URLTypes = "",

- transport: httpcore.AsyncHTTPTransport = None,

+ transport: AsyncBaseTransport = None,

app: typing.Callable = None,

trust_env: bool = True,

):

@@ -1225,13 +1299,6 @@ def __init__(

"Make sure to install httpx using `pip install httpx[http2]`."

) from None

- if pool_limits is not None:

- warn_deprecated(

- "AsyncClient(..., pool_limits=...) is deprecated and will raise "

- "errors in the future. Use AsyncClient(..., limits=...) instead."

- )

- limits = pool_limits

-

allow_env_proxies = trust_env and app is None and transport is None

proxy_map = self._get_proxy_map(proxies, allow_env_proxies)

@@ -1245,9 +1312,7 @@ def __init__(

trust_env=trust_env,

)

- self._mounts: typing.Dict[

- URLPattern, typing.Optional[httpcore.AsyncHTTPTransport]

- ] = {

+ self._mounts: typing.Dict[URLPattern, typing.Optional[AsyncBaseTransport]] = {

URLPattern(key): None

if proxy is None

else self._init_proxy_transport(

@@ -1272,10 +1337,10 @@ def _init_transport(

cert: CertTypes = None,

http2: bool = False,

limits: Limits = DEFAULT_LIMITS,

- transport: httpcore.AsyncHTTPTransport = None,

+ transport: AsyncBaseTransport = None,

app: typing.Callable = None,

trust_env: bool = True,

- ) -> httpcore.AsyncHTTPTransport:

+ ) -> AsyncBaseTransport:

if transport is not None:

return transport

@@ -1294,7 +1359,7 @@ def _init_proxy_transport(

http2: bool = False,

limits: Limits = DEFAULT_LIMITS,

trust_env: bool = True,

- ) -> httpcore.AsyncHTTPTransport:

+ ) -> AsyncBaseTransport:

return AsyncHTTPTransport(

verify=verify,

cert=cert,

@@ -1304,7 +1369,7 @@ def _init_proxy_transport(

proxy=proxy,

)

- def _transport_for_url(self, url: URL) -> httpcore.AsyncHTTPTransport:

+ def _transport_for_url(self, url: URL) -> AsyncBaseTransport:

"""

Returns the transport instance that should be used for a given URL.

This will either be the standard connection pool, or a proxy.

@@ -1363,6 +1428,56 @@ async def request(

)

return response

+ @asynccontextmanager

+ async def stream(

+ self,

+ method: str,

+ url: URLTypes,

+ *,

+ content: RequestContent = None,

+ data: RequestData = None,

+ files: RequestFiles = None,

+ json: typing.Any = None,

+ params: QueryParamTypes = None,

+ headers: HeaderTypes = None,

+ cookies: CookieTypes = None,

+ auth: typing.Union[AuthTypes, UnsetType] = UNSET,

+ allow_redirects: bool = True,

+ timeout: typing.Union[TimeoutTypes, UnsetType] = UNSET,

+ ) -> typing.AsyncIterator[Response]:

+ """

+ Alternative to `httpx.request()` that streams the response body

+ instead of loading it into memory at once.

+

+ **Parameters**: See `httpx.request`.

+

+ See also: [Streaming Responses][0]

+

+ [0]: /quickstart#streaming-responses

+ """

+ request = self.build_request(

+ method=method,

+ url=url,

+ content=content,

+ data=data,

+ files=files,

+ json=json,

+ params=params,

+ headers=headers,

+ cookies=cookies,

+ )

+ response = await self.send(

+ request=request,

+ auth=auth,

+ allow_redirects=allow_redirects,

+ timeout=timeout,

+ stream=True,

+ )

+ try:

+ yield response

+ finally:

+ await response.aclose()

+

async def send(

self,

request: Request,

@@ -1400,21 +1515,18 @@ async def send(

allow_redirects=allow_redirects,

history=[],

)

-

- if not stream:

- try:

+ try:

+ if not stream:

await response.aread()

- finally:

- await response.aclose()

- try:

for hook in self._event_hooks["response"]:

await hook(response)

- except Exception:

- await response.aclose()

- raise

- return response

+ return response

+

+ except Exception as exc:

+ await response.aclose()

+ raise exc

async def _send_handling_auth(

self,

@@ -1438,18 +1550,20 @@ async def _send_handling_auth(

history=history,

)

try:

- next_request = await auth_flow.asend(response)

- except StopAsyncIteration:

- return response

- except BaseException as exc:

- await response.aclose()

- raise exc from None

- else:

+ try:

+ next_request = await auth_flow.asend(response)

+ except StopAsyncIteration:

+ return response

+

response.history = list(history)

await response.aread()

request = next_request

history.append(response)

+ except Exception as exc:

+ await response.aclose()

+ raise exc

+

async def _send_handling_redirects(

self,

request: Request,

@@ -1464,19 +1578,24 @@ async def _send_handling_redirects(

)

response = await self._send_single_request(request, timeout)

- response.history = list(history)

+ try:

+ response.history = list(history)

- if not response.is_redirect:

- return response

+ if not response.is_redirect:

+ return response

- if allow_redirects:

- await response.aread()

- request = self._build_redirect_request(request, response)

- history = history + [response]

+ request = self._build_redirect_request(request, response)

+ history = history + [response]

- if not allow_redirects:

- response.next_request = request

- return response

+ if allow_redirects:

+ await response.aread()

+ else:

+ response.next_request = request

+ return response

+

+ except Exception as exc:

+ await response.aclose()

+ raise exc

async def _send_single_request(

self, request: Request, timeout: Timeout

@@ -1488,30 +1607,34 @@ async def _send_single_request(

timer = Timer()

await timer.async_start()

- with map_exceptions(HTTPCORE_EXC_MAP, request=request):

- (status_code, headers, stream, ext) = await transport.arequest(

+ if not isinstance(request.stream, AsyncByteStream):

+ raise RuntimeError(

+ "Attempted to send a sync request with an AsyncClient instance."

+ )

+

+ with request_context(request=request):

+ (

+ status_code,

+ headers,

+ stream,

+ extensions,

+ ) = await transport.handle_async_request(

request.method.encode(),

request.url.raw,

headers=request.headers.raw,

- stream=request.stream, # type: ignore

- ext={"timeout": timeout.as_dict()},

+ stream=request.stream,

+ extensions={"timeout": timeout.as_dict()},

)

- async def on_close(response: Response) -> None:

- response.elapsed = datetime.timedelta(seconds=await timer.async_elapsed())

- if hasattr(stream, "aclose"):

- with map_exceptions(HTTPCORE_EXC_MAP, request=request):

- await stream.aclose()

-

response = Response(

status_code,

headers=headers,

- stream=stream, # type: ignore

- ext=ext,

+ stream=stream,

+ extensions=extensions,

request=request,

- on_close=on_close,

)

+ response.stream = BoundAsyncStream(stream, response=response, timer=timer)

self.cookies.extract_cookies(response)

status = f"{response.status_code} {response.reason_phrase}"

@@ -1769,69 +1892,28 @@ async def __aexit__(

def __del__(self) -> None:

if self._state == ClientState.OPENED:

+ # Unlike the sync case, we cannot silently close the client when

+ # it is garbage collected, because `.aclose()` is an async operation,

+ # but `__del__` is not.

+ #

+ # For this reason we require explicit close management for

+ # `AsyncClient`, and issue a warning on unclosed clients.

+ #

+ # The context managed style is usually preferable, because it neatly

+ # ensures proper resource cleanup:

+ #

+ # async with httpx.AsyncClient() as client:

+ # ...

+ #

+ # However, an explicit call to `aclose()` is also sufficient:

+ #

+ # client = httpx.AsyncClient()

+ # try:

+ # ...

+ # finally:

+ # await client.aclose()

warnings.warn(

f"Unclosed {self!r}. "

"See https://www.python-httpx.org/async/#opening-and-closing-clients "

"for details."

)

-

-

-class StreamContextManager:

- def __init__(

- self,

- client: BaseClient,

- request: Request,

- *,

- auth: typing.Union[AuthTypes, UnsetType] = UNSET,

- allow_redirects: bool = True,

- timeout: typing.Union[TimeoutTypes, UnsetType] = UNSET,

- close_client: bool = False,

- ) -> None:

- self.client = client

- self.request = request

- self.auth = auth

- self.allow_redirects = allow_redirects

- self.timeout = timeout

- self.close_client = close_client

-

- def __enter__(self) -> "Response":

- assert isinstance(self.client, Client)

- self.response = self.client.send(

- request=self.request,

- auth=self.auth,

- allow_redirects=self.allow_redirects,

- timeout=self.timeout,

- stream=True,

- )

- return self.response

-

- def __exit__(

- self,

- exc_type: typing.Type[BaseException] = None,

- exc_value: BaseException = None,

- traceback: TracebackType = None,

- ) -> None:

- assert isinstance(self.client, Client)

- self.response.close()

- if self.close_client:

- self.client.close()

-

- async def __aenter__(self) -> "Response":

- assert isinstance(self.client, AsyncClient)

- self.response = await self.client.send(

- request=self.request,

- auth=self.auth,

- allow_redirects=self.allow_redirects,

- timeout=self.timeout,

- stream=True,

- )

- return self.response

-

- async def __aexit__(

- self,

- exc_type: typing.Type[BaseException] = None,

- exc_value: BaseException = None,

- traceback: TracebackType = None,

- ) -> None:

- assert isinstance(self.client, AsyncClient)

- await self.response.aclose()
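
Reviewer note: the hunks above replace the hand-written `StreamContextManager` class with an `@asynccontextmanager` generator whose `finally` block closes the response. The sketch below illustrates that pattern in isolation. `FakeResponse` and `FakeClient` are hypothetical stand-ins invented for this note, not HTTPX classes, and the real `AsyncClient.stream()` takes the request arguments shown in the diff.

```python
import asyncio
from contextlib import asynccontextmanager


class FakeResponse:
    """Hypothetical stand-in for a response; it only tracks whether it was closed."""

    def __init__(self) -> None:
        self.closed = False

    async def aclose(self) -> None:
        self.closed = True


class FakeClient:
    """Hypothetical stand-in for a client with a trivial send()."""

    async def send(self, stream: bool = False) -> FakeResponse:
        return FakeResponse()

    @asynccontextmanager
    async def stream(self):
        response = await self.send(stream=True)
        try:
            yield response
        finally:
            # Runs on normal exit and when the body of `async with` raises.
            await response.aclose()


async def demo() -> tuple:
    client = FakeClient()

    # Normal exit: the response is open inside the block, closed afterwards.
    async with client.stream() as ok_response:
        open_inside_block = not ok_response.closed

    # Exceptional exit: the response is still closed before the error propagates.
    failed_response = None
    try:
        async with client.stream() as failed_response:
            raise ValueError("simulated failure while reading the body")
    except ValueError:
        pass

    return open_inside_block, ok_response.closed, failed_response.closed


result = asyncio.run(demo())
```

In this sketch the `finally` clause plays the role that `__aexit__` played in the removed class, so the response is closed whether the block exits normally or with an exception.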

diff --git a/httpx/_compat.py b/httpx/_compat.py

new file mode 100644

index 0000000000..47c12ba199

--- /dev/null

+++ b/httpx/_compat.py

@@ -0,0 +1,6 @@

+# `contextlib.asynccontextmanager` exists from Python 3.7 onwards.

+# For 3.6 we require the `async_generator` package for a backported version.

+try:

+ from contextlib import asynccontextmanager # type: ignore

+except ImportError: # pragma: no cover

+ from async_generator import asynccontextmanager # type: ignore # noqa

diff --git a/httpx/_content.py b/httpx/_content.py

index bf402c9e29..9c7c1ff225 100644

--- a/httpx/_content.py

+++ b/httpx/_content.py

@@ -1,4 +1,5 @@

import inspect

+import warnings

from json import dumps as json_dumps

from typing import (

Any,

@@ -12,93 +13,86 @@

)