## **ATTENTION: Our code base in this repo assumes you:**

-A. You have 2D videos and a DeepLabCut network to analyze them as described in the [main documentation](overview). This can be with multiple separate networks for each camera (less recommended), or one network trained on all views - recommended! (See [Nath*, Mathis* et al., 2019](https://www.biorxiv.org/content/10.1101/476531v1)). We also support multi-animal 3D with this code (please see [Lauer et al. 2022](https://doi.org/10.1038/s41592-022-01443-0)).

+A. You have 2D videos and a DeepLabCut network to analyze them as described in the

+[main documentation](overview). This can be with multiple

+separate networks for each camera (less recommended), or one network trained on all views - recommended! (See

+[Nath*, Mathis* et al., 2019](https://www.biorxiv.org/content/10.1101/476531v1)). We also support multi-animal 3D with this code (please see

+[Lauer et al. 2022](https://doi.org/10.1038/s41592-022-01443-0)).

B. You are using 2 cameras, in a [stereo configuration](https://github.com/DeepLabCut/DeepLabCut/blob/5ac4c8cb6bcf2314a3abfcf979b8dd170608e094/deeplabcut/pose_estimation_3d/camera_calibration.py#L223), for 3D*.

@@ -20,12 +27,16 @@ Here are other excellent options for you to use that extend DeepLabCut:

-- **[AcinoSet](https://github.com/African-Robotics-Unit/AcinoSet)**; **n**-camera support with triangulation, extended Kalman filtering, and trajectory optimization code (see video to the right for a min demo, courtesy of Prof. Patel), plus a GUI to visualize 3D data. It is built to work directly with DeepLabCut (but currently tailored to cheetah's, thus some coding skills are required at this time).

+- **[AcinoSet](https://github.com/African-Robotics-Unit/AcinoSet)**; **n**-camera support with triangulation, extended Kalman filtering, and trajectory optimization

+code (see video to the right for a min demo, courtesy of Prof. Patel), plus a GUI to visualize 3D data. It is built to

+work directly with DeepLabCut (but currently tailored to cheetahs, thus some coding skills are required at this time).

-- **[anipose.org](https://anipose.readthedocs.io/en/latest/)**; a wrapper for 3D deeplabcut that provides >3 camera support and is built to work directly with DeepLabCut. You can `pip install anipose` into your DLC conda environment.

+- **[anipose.org](https://anipose.readthedocs.io/en/latest/)**; a wrapper for 3D deeplabcut that provides >3 camera support and is built to work directly with

+DeepLabCut. You can `pip install anipose` into your DLC conda environment.

-- **Argus, easywand or DLTdv** w/DeepLabCut see https://github.com/haliaetus13/DLCconverterDLT; this can be used with the the highly popular Argus or DLTdv tools for wand calibration.

+- **Argus, easywand or DLTdv** w/DeepLabCut see https://github.com/haliaetus13/DLCconverterDLT; this can be used with

+the highly popular Argus or DLTdv tools for wand calibration.

## Jump in with direct DeepLabCut 2-camera support:

@@ -39,83 +50,118 @@ Here are other excellent options for you to use that extend DeepLabCut:

### (1) Create a New 3D Project:

-Watch a [DEMO VIDEO](https://youtu.be/Eh6oIGE4dwI) on how to use this code, and check out the Notebook [here](https://github.com/DeepLabCut/DeepLabCut/blob/master/examples/JUPYTER/Demo_3D_DeepLabCut.ipynb)!

+Watch a [DEMO VIDEO](https://youtu.be/Eh6oIGE4dwI) on how to use this code, and check out the Notebook [here](https://github.com/DeepLabCut/DeepLabCut/blob/main/examples/JUPYTER/Demo_3D_DeepLabCut.ipynb)!

-You will run this function **one** time per project; a project is defined as a given set of cameras and calibration images. You can always analyze new videos within this project.

+You will run this function **one** time per project; a project is defined as a given set of cameras and calibration

+images. You can always analyze new videos within this project.

-The function **create\_new\_project\_3d** creates a new project directory specifically for converting the 2D pose to 3D pose, required subdirectories, and a basic 3D project configuration file. Each project is identified by the name of the project (e.g. Task1), name of the experimenter (e.g. YourName), as well as the date at creation.

+The function **create\_new\_project\_3d** creates a new project directory specifically for converting the 2D pose to 3D

+pose, required subdirectories, and a basic 3D project configuration file. Each project is identified by the name of the

+project (e.g. Task1), name of the experimenter (e.g. YourName), as well as the date of creation.

-Thus, this function requires the user to input the enter the name of the project, the name of the experimenter and number of cameras to be used. Currently, DeepLabCut supports triangulation using 2 cameras, but will expand to more than 2 cameras in a future version.

+Thus, this function requires the user to enter the name of the project, the name of the experimenter and number of

+cameras to be used. Currently, DeepLabCut supports triangulation using 2 cameras, but will expand to more than 2 cameras

+in a future version.

To start a 3D project type the following in ipython:

```python

-deeplabcut.create_new_project_3d('ProjectName','NameofLabeler',num_cameras = 2)

+deeplabcut.create_new_project_3d("ProjectName", "NameofLabeler", num_cameras=2)

```

-TIP 1: you can also pass ``working_directory=`Full path of the working directory'`` if you want to place this folder somewhere beside the current directory you are working in. If the optional argument ``working_directory`` is unspecified, the project directory is created in the current working directory.

+TIP 1: you can also pass `working_directory="Full path of the working directory"` if you want to place this folder

+somewhere other than the current directory you are working in. If the optional argument `working_directory` is unspecified,

+the project directory is created in the current working directory.

-TIP 2: you can also place ``config_path3d`` in front of ``deeplabcut.create_new_project_3d`` to create a variable that holds the path to the config.yaml file, i.e. ``config_path3d=deeplabcut.create_new_project_3d(...`` Or, set this variable for easy use. Please note that ``config_path3d='Full path of the 3D project configuration file'``.

+TIP 2: you can also place `config_path3d` in front of `deeplabcut.create_new_project_3d` to create a variable that holds

+the path to the config.yaml file, i.e. `config_path3d=deeplabcut.create_new_project_3d(...` Or, set this variable for

+easy use. Please note that `config_path3d='Full path of the 3D project configuration file'`.
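The two tips can be combined into one small wrapper. The following is a minimal sketch, not part of DeepLabCut: `project_3d_kwargs` and `start_3d_project` are hypothetical helper names, and the sketch assumes, as TIP 2 describes, that `create_new_project_3d` returns the path to the 3D config file.

```python
from pathlib import Path


def project_3d_kwargs(working_directory=None):
    """Build keyword arguments for a 2-camera 3D project (hypothetical helper)."""
    kwargs = {"num_cameras": 2}
    if working_directory is not None:
        # Expand "~" so the project folder lands where the user expects.
        kwargs["working_directory"] = str(Path(working_directory).expanduser())
    return kwargs


def start_3d_project(project, experimenter, working_directory=None):
    """Create the project and return the path to its 3D config file."""
    import deeplabcut  # imported lazily; requires DeepLabCut to be installed

    return deeplabcut.create_new_project_3d(
        project, experimenter, **project_3d_kwargs(working_directory)
    )
```

With DeepLabCut installed, `config_path3d = start_3d_project("ProjectName", "NameofLabeler")` would then hold the config path for the later steps.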

- This function will create a project directory with the name **Name of the project+name of the experimenter+date of creation of the project+3d** in the **Working directory**. The project directory will have subdirectories: **calibration_images**, **camera_matrix**, **corners**, and **undistortion**. All the outputs generated during the course of a project will be stored in one of these subdirectories, thus allowing each project to be curated in separation from other projects.

+This function will create a project directory with the name **Name of the project+name of the experimenter+date of

+creation of the project+3d** in the **Working directory**. The project directory will have subdirectories:

+**calibration_images**, **camera_matrix**, **corners**, and **undistortion**. All the outputs generated during the

+course of a project will be stored in one of these subdirectories, thus allowing each project to be curated in

+separation from other projects.

- The purpose of the subdirectories is as follows:

+The purpose of the subdirectories is as follows:

- **calibration_images:** This directory will contain a set of calibration images acquired from the two cameras. A calibration image can be acquired using a printed checkerboard and its pair wise images are taken from both the cameras to consider as a set of calibration images. These pair of images are saved as ``.jpg`` with camera names as the prefix. e.g. ``camera-1-01.jpg`` and ``camera-2-01.jpg`` for the first pair of images. While taking the images:

-- Keep the orientation of the chessboard same and do not rotate more than 30 degrees. Rotating the chessboard circular will change the origin across the frames and may result in incorrect order of detected corners.

-- Cover several distances, and within each distance, cover all parts of the image view (all corners and center).

-Use a chessboard as big as possible, ideally a chessboard with of at least 8x6 squares.

-- Aim for taking at least 70 pair of images as after corner detection, some of the images might need to be discarded due to either incorrect corner detection or incorrect order of detected corners.

+**calibration_images:** This directory will contain a set of calibration images acquired from the two cameras. Each

+calibration image shows a printed checkerboard and is taken as a pair, one image from each camera; together these

+pairs form the set of calibration images.

- **camera_matrix:** This directory will store the parameter for both the cameras as a pickle file. Specifically, these pickle files contain the intrinsic and extrinsic camera parameters. While the intrinsic parameters represent a transformation from 3-D camera's coordinates into the image coordinates, the extrinsic parameters represent a rigid transformation from world coordinate system to the 3-D camera's coordinate system.

+**camera_matrix:** This directory will store the parameters for both cameras as pickle files. Specifically, these

+pickle files contain the intrinsic and extrinsic camera parameters. While the intrinsic parameters represent a

+transformation from 3-D camera's coordinates into the image coordinates, the extrinsic parameters represent a rigid

+transformation from world coordinate system to the 3-D camera's coordinate system.

- **corners:** As a part of camera calibration, the checkerboard pattern is detected in the calibration images and these patterns will be stored in this directory. Each row of the checkerboard grid is marked with a unique color.

+**corners:** As a part of camera calibration, the checkerboard pattern is detected in the calibration images and these

+patterns will be stored in this directory. Each row of the checkerboard grid is marked with a unique color.

- **undistortion:** In order to check for calibration, the calibration images and the corresponding corner points are undistorted. These undistorted images are overlaid with undistorted points and will be stored in this directory.

+**undistortion:** In order to check the calibration, the calibration images and the corresponding corner points are

+undistorted. These undistorted images are overlaid with undistorted points and will be stored in this directory.
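Before calibrating, it can help to confirm that a project folder has the four subdirectories and that every calibration image has a partner from the other camera. The following is a rough sketch using only the standard library. The helper names are hypothetical, and the filename pattern assumes the `camera-1-01.jpg` / `camera-2-01.jpg` convention (camera name as prefix, image index as suffix).

```python
import re
from collections import defaultdict
from pathlib import Path

EXPECTED_SUBDIRS = ("calibration_images", "camera_matrix", "corners", "undistortion")
# Assumed naming convention: "<camera name>-<index>.jpg", e.g. "camera-1-01.jpg".
PAIR_PATTERN = re.compile(r"^(?P<camera>.+)-(?P<index>\d+)\.jpg$")


def missing_subdirs(project_dir):
    """Return the expected subdirectories that are absent from project_dir."""
    root = Path(project_dir)
    return [name for name in EXPECTED_SUBDIRS if not (root / name).is_dir()]


def group_calibration_images(filenames):
    """Map each image index to the sorted camera prefixes that provide it."""
    groups = defaultdict(set)
    for name in filenames:
        match = PAIR_PATTERN.match(name)
        if match:  # files that do not follow the convention are ignored
            groups[match["index"]].add(match["camera"])
    return {index: sorted(cameras) for index, cameras in groups.items()}


def unpaired_indices(filenames, num_cameras=2):
    """Return the image indices that are not covered by every camera."""
    groups = group_calibration_images(filenames)
    return sorted(i for i, cameras in groups.items() if len(cameras) != num_cameras)
```

For example, `unpaired_indices(p.name for p in Path(project_dir, "calibration_images").glob("*.jpg"))` lists the indices to re-shoot or discard.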

- Here is an overview of the calibration and triangulation workflow that follows:

+Here is an overview of the calibration and triangulation workflow that follows:

-

- +

@@ -213,22 +296,36 @@ The **triangulated file** is now saved under the same directory where the video

### (5) Visualize your 3D DeepLabCut Videos:

-In order to visualize both the 2D videos with tracked points plut the pose in 3D, the user can create a 3D video for certain frames (these are large files, so we advise just looking at a subset of frames). The user can specify the config file, the **path of the triangulated file folder**, and specify the start and end frame indices to create a 3D labeled video. Note that the ``triangulated_file_folder`` is where the newly created file that ends with ``yourDLC_3D_scorername.h5`` is located. This can be done using:

+In order to visualize both the 2D videos with tracked points plus the pose in 3D, the user can create a 3D video for

+certain frames (these are large files, so we advise just looking at a subset of frames). The user can specify the config

+file, the **path of the triangulated file folder**, and specify the start and end frame indices to create a 3D labeled

+video. Note that the `triangulated_file_folder` is where the newly created file that ends with

+`yourDLC_3D_scorername.h5` is located. This can be done using:

```python

-deeplabcut.create_labeled_video_3d(config_path, ['triangulated_file_folder'], start=50, end=250)

+deeplabcut.create_labeled_video_3d(

+    config_path,

+    ["triangulated_file_folder"],

+    start=50,

+    end=250

+)

```

-**TIP:** (see more parameters below) You can set how the axis of the 3D plot on the far right looks by changing the variables ``xlim``, ``ylim``, ``zlim`` and ``view``. Your checkerboard_3d.png image which was created above will show you the axis ranges. Here is an example:

+**TIP:** (see more parameters below) You can set how the axis of the 3D plot on the far right looks by changing the

+variables `xlim`, `ylim`, `zlim` and `view`. Your checkerboard_3d.png image which was created above will show you the

+axis ranges. Here is an example:
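As a rough sketch of how the frame range and plot options might be gathered before the call: `labeled_video_3d_kwargs` and `render_clip` are hypothetical helpers, the numeric values in the usage note are placeholders rather than recommended settings, and the two-value check on `xlim`, `ylim`, `zlim` and `view` is an assumption about their shape.

```python
def labeled_video_3d_kwargs(start, end, xlim=None, ylim=None, zlim=None, view=None):
    """Collect options for a 3D labeled video, dropping any that are unset."""
    if start < 0 or end <= start:
        raise ValueError("expected 0 <= start < end")
    kwargs = {"start": start, "end": end}
    options = (("xlim", xlim), ("ylim", ylim), ("zlim", zlim), ("view", view))
    for name, value in options:
        if value is None:
            continue  # leave the library default in place
        if len(value) != 2:
            # Assumption: each option is a pair of values.
            raise ValueError(f"{name} should hold exactly two values")
        kwargs[name] = list(value)
    return kwargs


def render_clip(config_path, triangulated_file_folder, **options):
    """Create a 3D labeled video for a subset of frames."""
    import deeplabcut  # imported lazily; requires DeepLabCut to be installed

    deeplabcut.create_labeled_video_3d(
        config_path, [triangulated_file_folder], **labeled_video_3d_kwargs(**options)
    )
```

A call such as `render_clip(config_path, "triangulated_file_folder", start=50, end=250, xlim=(-10, 10))` would then render frames 50 to 250 with a fixed x-axis range; the ranges themselves should be read off your own `checkerboard_3d.png`.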

diff --git a/docs/beginner-guides/labeling.md b/docs/beginner-guides/labeling.md

index 7d944137a6..e5c7492722 100644

--- a/docs/beginner-guides/labeling.md

+++ b/docs/beginner-guides/labeling.md

@@ -1,3 +1,4 @@

+(labeling)=

# Labeling GUI

## Selecting Frames to Label

@@ -45,7 +46,9 @@ Alright, you've got your extracted frames ready. Now comes the labeling!

- **Navigate Through Frames:** Use the slider to go from one frame to the next after you're done labeling.

- **Save Progress:** Remember to save your work as you go with **`Command and S`** (or **`Ctrl and S`** on Windows).

-> 💡 **Note:** For a detailed walkthrough on using the Napari labeling GUI, have a look at the [DeepLabCut Napari Guide](https://deeplabcut.github.io/DeepLabCut/docs/napari_GUI.html). Additionally, you can watch our instructional [YouTube video](https://www.youtube.com/watch?v=hsA9IB5r73E) for more insights and tips.

+> 💡 **Note:** For a detailed walkthrough on using the Napari labeling GUI, have a look at the

+[DeepLabCut Napari Guide](napari-gui). Additionally, you can watch our instructional

+[YouTube video](https://www.youtube.com/watch?v=hsA9IB5r73E) for more insights and tips.

### Completing the Set

diff --git a/docs/beginner-guides/manage-project.md b/docs/beginner-guides/manage-project.md

index a53789360f..3f26589ae2 100644

--- a/docs/beginner-guides/manage-project.md

+++ b/docs/beginner-guides/manage-project.md

@@ -41,4 +41,4 @@ A **`Configuration Editor`** window will open, displaying all the configuration

- **Save the Configuration:** Once you're satisfied with the modifications, click **`Save`**. This will store your changes and return you to the main GUI window.

-## Next, head over the beginner guide for [Labeling your data](https://deeplabcut.github.io/DeepLabCut/docs/labelling)

+## Next, head over to the beginner guide for [Labeling your data](labeling)

diff --git a/docs/beginner-guides/video-analysis.md b/docs/beginner-guides/video-analysis.md

index 59c976360d..849d8b6638 100644

--- a/docs/beginner-guides/video-analysis.md

+++ b/docs/beginner-guides/video-analysis.md

@@ -16,7 +16,7 @@ After training and evaluating your model, the next step is to apply it to your v

- **Find Results in Your Project Folder:** After analysis, go to your project's video folder.

- **Analysis Files:** Look also for a `.metapickle`, an `.h5`, and possibly a `.csv` file for detailed analysis data.

-- **Review the Plot Poses Subfolder:** This contains visual outputs of the video analysis.

+- **Review the "plot-poses" subfolder:** This contains visual outputs of the video analysis.

diff --git a/docs/convert_maDLC.md b/docs/convert_maDLC.md

index 6671afb1ef..19dd017692 100644

--- a/docs/convert_maDLC.md

+++ b/docs/convert_maDLC.md

@@ -1,10 +1,12 @@

(convert-maDLC)=

-# How to convert a pre-2.2 project for use with DeepLabCut 2.2

+# How to convert a pre-2.2 project for use with DeepLabCut 2.2 or later

-If you have a pre-2.2 project (`labeled-data`) with a **single animal** that you want to use with a multianimal project in DLC 2.2, i.e. use your older data to now train the new multi-task deep neural network, here is what you need to do.

+If you have a pre-2.2 project (`labeled-data`) with a **single animal** that you want to use with a multianimal project

+in DLC 2.2 or later, i.e. use your older data to now train the new multi-task deep neural network, here is what you

+need to do.

(1) We recommend you make a back-up of your project folder.

@@ -14,7 +16,8 @@ If you have a pre-2.2 project (`labeled-data`) with a **single animal** that you

-- After `task, scorer, date, project_path` please add the following (i.e. in the image above, you would start adding below line 6) Note, the ordering isn't important but useful to keep consistent with the template:

+- After `task, scorer, date, project_path` please add the following (i.e. in the image above, you would start adding

+below line 6) Note, the ordering isn't important but useful to keep consistent with the template:

```python

multianimalproject: true

@@ -29,9 +32,12 @@ individuals:

- mouse1

```

-- `"uniquebodyparts: []` can stay blank, unless you have other items labeled you want to estimate (consider these as similar to bodyparts in pre-2.2); i.e. corners of a box, etc. All unique bodyparts should not be connected to the multianimal bodyparts in the skeleton you will eventually make. But see "advanced option" below.

+- `uniquebodyparts: []` can stay blank, unless you have other items labeled you want to estimate (consider these as

+similar to bodyparts in pre-2.2); i.e. corners of a box, etc. All unique bodyparts should not be connected to the

+multianimal bodyparts in the skeleton you will eventually make. See "advanced option" below.

-- Please move your "bodyparts:" to "multianimalbodyparts:" (bodypart names must stay the same!) These are the parts that will always be interconnected fully!

+- Please move your "bodyparts:" to "multianimalbodyparts:" (bodypart names must stay the same!) These are the parts

+that will always be interconnected fully!

```python

multianimalbodyparts:

- snout

@@ -46,20 +52,25 @@ then you can set `bodyparts: MULTI!`

deeplabcut.convert2_maDLC(path_config_file, userfeedback=True)

```

-Now you will see that your data within `labeled-data` are converted to a new format, and the single animal format was saved for you under a new file named `CollectedData_ ...singleanimal.h5` and `.csv` as a back-up!

+Now you will see that your data within `labeled-data` are converted to a new format, and the single animal format was

+saved for you under a new file named `CollectedData_ ...singleanimal.h5` and `.csv` as a back-up!

-(4) We strongly recommend to first run check_labels and verify that the conversion was as expected before creating a multianimal training dataset. For instance, you can load this project `config.yaml` in the Project Manager GUI and check labels then create a multi-animal training set with

+(4) We strongly recommend to first run check_labels and verify that the conversion was as expected before creating a

+multianimal training dataset. For instance, you can load this project `config.yaml` in the Project Manager GUI and

+check labels then create a multi-animal training set with

```python

deeplabcut.create_multianimaltraining_dataset(path_config_file)

```

to begin training.
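Steps (1) to (4) can be chained in a short script. The following is a minimal sketch, not an official DeepLabCut utility: `backup_project` and `convert_project` are hypothetical helper names, and the `dlc` argument stands for the imported `deeplabcut` module, passed in explicitly so the sketch can be read without DeepLabCut installed. The three calls it makes (`convert2_maDLC`, `check_labels`, `create_multianimaltraining_dataset`) are the ones named in this guide.

```python
import shutil
from pathlib import Path


def backup_project(project_dir, suffix="_backup"):
    """Copy the whole project folder next to itself (step 1).

    Hypothetical helper. `shutil.copytree` raises an error if the
    backup folder already exists, so an old backup is never overwritten.
    """
    src = Path(project_dir)
    dst = src.with_name(src.name + suffix)
    shutil.copytree(src, dst)
    return dst


def convert_project(path_config_file, dlc):
    """Convert the labels, check them, then build the training set.

    `dlc` is assumed to be the imported `deeplabcut` module.
    """
    # Step 3: convert `labeled-data` to the multi-animal format.
    dlc.convert2_maDLC(path_config_file, userfeedback=True)
    # Step 4: plot the converted labels so they can be inspected by eye.
    dlc.check_labels(path_config_file)
    # Only meaningful once the plotted labels look as expected.
    dlc.create_multianimaltraining_dataset(path_config_file)


# Usage (paths are placeholders; config.yaml must be edited first, step 2):
# import deeplabcut
# backup_project("/path/to/my-project")
# convert_project("/path/to/my-project/config.yaml", deeplabcut)
```

In practice you would likely pause after `check_labels` and look at the generated images before creating the training dataset; the calls are shown back to back only to make the order explicit.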

-**Advanced option:** You can also assign former `bodyparts` to either `uniquebodyparts` or `multianimalbodyparts` (you can even leave some unassigned, which means they will be dropped in the conversion).

+**Advanced option:** You can also assign former `bodyparts` to either `uniquebodyparts` or `multianimalbodyparts`

+(you can even leave some unassigned, which means they will be dropped in the conversion).
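To preview which labels such an assignment keeps and which it drops, a small pure-Python check can help before editing `config.yaml`. This is only an illustrative sketch: `split_bodyparts` is a hypothetical helper, not part of DeepLabCut, and the bodypart names in the usage comment are made up.

```python
def split_bodyparts(old_bodyparts, unique, multi):
    """Check an assignment of pre-2.2 bodyparts and report what is dropped.

    Returns (fragment, dropped): the two lists to write to config.yaml,
    and the old bodyparts that were left unassigned.
    """
    assigned = list(unique) + list(multi)
    # Bodypart names must stay the same as in the old project.
    unknown = [bp for bp in assigned if bp not in old_bodyparts]
    if unknown:
        raise ValueError(f"not in the original bodyparts: {unknown}")
    # Assumption of this sketch: a bodypart goes to one list, not both.
    both = [bp for bp in unique if bp in multi]
    if both:
        raise ValueError(f"assigned twice: {both}")
    # Anything left unassigned is dropped in the conversion.
    dropped = [bp for bp in old_bodyparts if bp not in assigned]
    fragment = {
        "uniquebodyparts": list(unique),
        "multianimalbodyparts": list(multi),
    }
    return fragment, dropped


# Hypothetical names, for illustration only:
# fragment, dropped = split_bodyparts(
#     ["snout", "tailbase", "box_corner", "cable"],
#     unique=["box_corner"],
#     multi=["snout", "tailbase"],
# )
# `dropped` then lists the unassigned label, "cable".
```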

Example: Imagine you had a project with the moon and a rocket with two parts labeled:

`bodyparts: [moon, rocket_tip,rocket_bottom]`

-Now you want to use this former project (labeled-data) and work on a new dataset (videos) with one moon but multiple (3) rockets. Then convert it as follows:

+Now you want to use this former project (labeled-data) and work on a new dataset (videos) with one moon but multiple

+(3) rockets. Then convert it as follows:

```

individuals: [rocket1, rocket2, rocket3]

uniquebodyparts: [moon]

diff --git a/docs/course.md b/docs/course.md

index 2ad096995f..b71438cb4f 100644

--- a/docs/course.md

+++ b/docs/course.md

@@ -1,5 +1,10 @@

## DeepLabCut Self-paced Course

+::::{warning}

+This course was designed for DLC 2.

+An updated version for DLC 3 is in the works.

+::::

+

Do you have video of animal behaviors? Step 1: Get Poses ...

@@ -17,7 +22,7 @@ We expect it to take *roughly* 1-2 weeks to get through if you do it rigorously.

## Installation:

You need Python and DeepLabCut installed!

-- [See these "beginner docs" for help!](https://deeplabcut.github.io/DeepLabCut/docs/beginners-guide.html)

+- [See these "beginner docs" for help!](beginners-guide)

- **WATCH:** overview of conda: [Python Tutorial: Anaconda - Installation and Using Conda](https://www.youtube.com/watch?v=YJC6ldI3hWk)

@@ -32,10 +37,8 @@ You need Python and DeepLabCut installed!

- **Learning:** learning and teaching signal processing, and overview from Prof. Demba Ba [talk at JupyterCon](https://www.youtube.com/watch?v=ywz-LLYwkQQ)

-- **Learning:** Watch a talk from Alexander Mathis (a lead DeepLabCut developer) [talk about DeepLabCut!](https://www.youtube.com/watch?v=ZjWPHM0sL4E)

-

- **DEMO:** Can I DEMO DEEPLABCUT (DLC) quickly?

- - Yes: [you can click through this DEMO notebook](https://github.com/DeepLabCut/DeepLabCut/blob/master/examples/COLAB/COLAB_DEMO_mouse_openfield.ipynb)

+ - Yes: [you can click through this DEMO notebook](https://github.com/DeepLabCut/DeepLabCut/blob/main/examples/COLAB/COLAB_DEMO_mouse_openfield.ipynb)

- AND follow along with me: [Video Tutorial!](https://www.youtube.com/watch?v=DRT-Cq2vdWs)

@@ -55,9 +58,9 @@ You need Python and DeepLabCut installed!

**What you need:** any videos where you can see the animals/objects, etc.

You can use our demo videos, grab some from the internet, or use whatever older data you have. Any camera, color/monochrome, etc will work. Find diverse videos, and label what you want to track well :)

-- IF YOU ARE PART OF THE COURSE: you will be contributing to the DLC Model Zoo :smile:

+- IF YOU ARE PART OF THE COURSE: you will be contributing to the DLC Model Zoo 😊

- - **Slides:** [Overview of starting new projects](https://github.com/DeepLabCut/DeepLabCut-Workshop-Materials/blob/master/part1-labeling.pdf)

+ - **Slides:** [Overview of starting new projects](https://github.com/DeepLabCut/DeepLabCut-Workshop-Materials/blob/main/part1-labeling.pdf)

- **READ ME PLEASE:** [DeepLabCut, the science](https://rdcu.be/4Rep)

- **READ ME PLEASE:** [DeepLabCut, the user guide](https://rdcu.be/bHpHN)

- **WATCH:** Video tutorial 1: [using the Project Manager GUI](https://www.youtube.com/watch?v=KcXogR-p5Ak)

@@ -71,12 +74,12 @@ You can use our demo videos, grab some from the internet, or use whatever older

### **Module 2: Neural Networks**

- - **Slides:** [Overview of creating training and test data, and training networks](https://github.com/DeepLabCut/DeepLabCut-Workshop-Materials/blob/master/part2-network.pdf)

+ - **Slides:** [Overview of creating training and test data, and training networks](https://github.com/DeepLabCut/DeepLabCut-Workshop-Materials/blob/main/part2-network.pdf)

- **READ ME PLEASE:** [What are convolutional neural networks?](https://towardsdatascience.com/a-comprehensive-guide-to-convolutional-neural-networks-the-eli5-way-3bd2b1164a53)

- **READ ME PLEASE:** Here is a new paper from us describing challenges in robust pose estimation, why PRE-TRAINING really matters - which was our major scientific contribution to low-data input pose-estimation - and it describes new networks that are available to you. [Pretraining boosts out-of-domain robustness for pose estimation](https://paperswithcode.com/paper/pretraining-boosts-out-of-domain-robustness)

- - **MORE DETAILS:** ImageNet: check out the original paper and dataset: http://www.image-net.org/ (link to [ppt from Dr. Fei-Fei Li](http://www.image-net.org/papers/ImageNet_2010.ppt))

+ - **MORE DETAILS:** ImageNet: check out the original paper and dataset: http://www.image-net.org/

- **REVIEW PAPER:** [A Primer on Motion Capture with Deep Learning: Principles, Pitfalls and Perspectives](https://www.sciencedirect.com/science/article/pii/S0896627320307170)

@@ -84,18 +87,18 @@ You can use our demo videos, grab some from the internet, or use whatever older

Before you create a training/test set, please read/watch:

- - **More information:** [Which types neural networks are available, and what should I use?](https://github.com/AlexEMG/DeepLabCut/wiki/What-neural-network-should-I-use%3F)

+ - **More information:** [Which types neural networks are available, and what should I use?](https://github.com/DeepLabCut/DeepLabCut/wiki/What-neural-network-should-I-use%3F-(Trade-offs,-speed-performance,-and-considerations))

- **WATCH:** Video tutorial 1: [How to test different networks in a controlled way](https://www.youtube.com/watch?v=WXCVr6xAcCA)

- Now, decide what model(s) you want to test.

- IF you want to train on your CPU, then run the step `create_training_dataset`, in the GUI etc. on your own computer.

- - IF you want to use GPUs on google colab, [**(1)** watch this FIRST/follow along here!](https://www.youtube.com/watch?v=qJGs8nxx80A) **(2)** move your whole project folder to Google Drive, and then [**use this notebook**](https://github.com/DeepLabCut/DeepLabCut/blob/master/examples/COLAB/COLAB_YOURDATA_TrainNetwork_VideoAnalysis.ipynb)

+ - IF you want to use GPUs on google colab, [**(1)** watch this FIRST/follow along here!](https://www.youtube.com/watch?v=qJGs8nxx80A) **(2)** move your whole project folder to Google Drive, and then [**use this notebook**](https://github.com/DeepLabCut/DeepLabCut/blob/main/examples/COLAB/COLAB_YOURDATA_TrainNetwork_VideoAnalysis.ipynb)

**MODULE 2 webinar**: https://youtu.be/ILsuC4icBU0

### **Module 3: Evalution of network performance**

- - **Slides** [Evalute your network](https://github.com/DeepLabCut/DeepLabCut-Workshop-Materials/blob/master/part3-analysis.pdf)

+ - **Slides** [Evalute your network](https://github.com/DeepLabCut/DeepLabCut-Workshop-Materials/blob/main/part3-analysis.pdf)

- **WATCH:** [Evaluate the network in ipython](https://www.youtube.com/watch?v=bgfnz1wtlpo)

- why evaluation matters; how to benchmark; analyzing a video and using scoremaps, conf. readouts, etc.

diff --git a/docs/deeplabcutlive.md b/docs/deeplabcutlive.md

index f8784b651d..f1edbff3cc 100644

--- a/docs/deeplabcutlive.md

+++ b/docs/deeplabcutlive.md

@@ -1,3 +1,4 @@

+(deeplabcut-live)=

# DeepLabCut-Live!

We provide two additional pip packages that allow you to record and stream camera data and run DeeplabCut models in real-time.

diff --git a/docs/gui/PROJECT_GUI.md b/docs/gui/PROJECT_GUI.md

index 106e7eca5c..e0883c324e 100644

--- a/docs/gui/PROJECT_GUI.md

+++ b/docs/gui/PROJECT_GUI.md

@@ -10,7 +10,7 @@ As some users may be more comfortable working with an interactive interface, we

(1) Install DeepLabCut using the simple-install with Anaconda found [here!](how-to-install)*.

Now you have DeepLabCut installed, but if you want to update it, either follow the prompt in the GUI which will ask you to upgrade when a new version is available, or just go into your env (activate DEEPLABCUT) then run:

-` pip install 'deeplabcut[gui,modelzoo]'` *but please see [full install guide](https://deeplabcut.github.io/DeepLabCut/docs/installation.html)!

+` pip install 'deeplabcut[gui,modelzoo]'` *but please see [full install guide](how-to-install)!

(2) Open the terminal and run: `python -m deeplabcut`

@@ -23,15 +23,14 @@ Now you have DeepLabCut installed, but if you want to update it, either follow t

Start at the Project Management Tab and work your way through the tabs to built your customized model and deploy it on new data.

We recommend to keep the terminal visible (as well as the GUI) so you can see the ongoing processes as you step through your project, or any errors that might arise.

-- For specific napari-based labeling features, see the ["napari gui" docs](https://deeplabcut.github.io/DeepLabCut/docs/napari_GUI.html#usage).

+- For specific napari-based labeling features, see the ["napari gui" docs](napari-gui-usage).

- To change from dark to light mode, set appearance at the top:

+

+ ```{Hint}

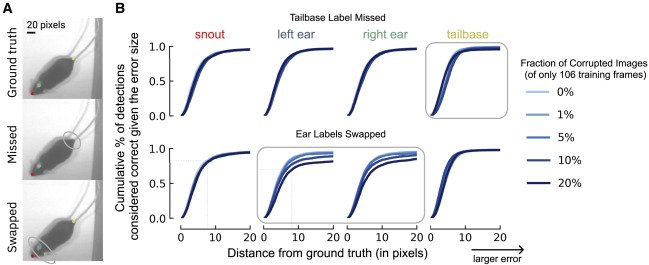

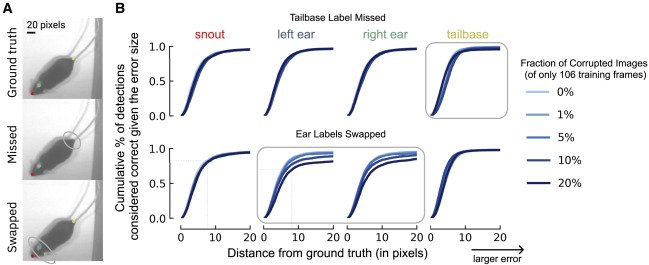

**Labeling Pitfalls: How Corruptions Affect Performance**

-(A) Illustration of two types of labeling errors. Top is ground truth, middle is missing a label at the tailbase, and bottom is if the labeler swapped the ear identity (left to right, etc.). (B) Using a small training dataset of 106 frames, how do the corruptions in (A) affect the percent of correct keypoints (PCK) on the test set as the distance to ground truth increases from 0 pixels (perfect prediction) to 20 pixels (larger error)? The x axis denotes the difference in the ground truth to the predicted location (RMSE in pixels), whereas the y axis is the fraction of frames considered accurate (e.g., z80% of frames fall within 9 pixels, even on this small training dataset, for points that are not corrupted, whereas for swapped points this falls to z65%). The fraction of the dataset that is corrupted affects this value. Shown is when missing the tailbase label (top) or swapping the ears in 1%, 5%, 10%, and 20% of frames (of 106 labeled training images). Swapping versus missing labels has a more notable adverse effect on network performance.

+(A) Illustration of two types of labeling errors. Top is ground truth, middle is missing a label at the tailbase, and

+bottom is if the labeler swapped the ear identity (left to right, etc.). (B) Using a small training dataset of 106

+frames, how do the corruptions in (A) affect the percent of correct keypoints (PCK) on the test set as the distance

+to ground truth increases from 0 pixels (perfect prediction) to 20 pixels (larger error)? The x axis denotes the

+difference in the ground truth to the predicted location (RMSE in pixels), whereas the y axis is the fraction of

+frames considered accurate (e.g., ≈80% of frames fall within 9 pixels, even on this small training dataset, for

+points that are not corrupted, whereas for swapped points this falls to ≈65%). The fraction of the dataset that is

+corrupted affects this value. Shown is when missing the tailbase label (top) or swapping the ears in 1%, 5%, 10%,

+and 20% of frames (of 106 labeled training images). Swapping versus missing labels has a more notable adverse effect

+on network performance.

```

-The DeepLabCut toolbox supports **active learning** by extracting outlier frames be several methods and allowing the user to correct the frames, then retrain the model. See the [Nature Protocols paper](https://www.nature.com/articles/s41596-019-0176-0) for the detailed steps, or in the docs, [here](https://deeplabcut.github.io/DeepLabCut/docs/standardDeepLabCut_UserGuide.html#m-optional-active-learning-network-refinement-extract-outlier-frames).

+The DeepLabCut toolbox supports **active learning** by extracting outlier frames using several methods and allowing the

+user to correct the frames, then retrain the model. See the

+[Nature Protocols paper](https://www.nature.com/articles/s41596-019-0176-0) for the detailed steps, or in the docs,

+[here](active-learning).

-To facilitate this process, here we propose a new way to detect 'outlier frames', which is planned to be released in ~Sept 2022. Your contributions and suggestions are welcomed, so test the [PR](https://github.com/DeepLabCut/napari-deeplabcut/pull/38) and give us feedback!

+To facilitate this process, here we propose a new way to detect 'outlier frames'.

+Your contributions and suggestions are welcomed, so test the

+[PR](https://github.com/DeepLabCut/napari-deeplabcut/pull/38) and give us feedback!

-This #cookbook recipe aims to show a usecase of **clustering in napari** and is contributed by 2022 DLC AI Resident [Sabrina Benas](https://twitter.com/Sabrineiitor) 💜.

+This #cookbook recipe aims to show a usecase of **clustering in napari** and is contributed by 2022 DLC AI Resident

+[Sabrina Benas](https://twitter.com/Sabrineiitor) 💜.

## Detect Outliers to Refine Labels

### Open `napari` and the `DeepLabCut plugin`

- - Then open your `CollectedData_

```{Hint}

**Labeling Pitfalls: How Corruptions Affect Performance**

-(A) Illustration of two types of labeling errors. Top is ground truth, middle is missing a label at the tailbase, and bottom is if the labeler swapped the ear identity (left to right, etc.). (B) Using a small training dataset of 106 frames, how do the corruptions in (A) affect the percent of correct keypoints (PCK) on the test set as the distance to ground truth increases from 0 pixels (perfect prediction) to 20 pixels (larger error)? The x axis denotes the difference in the ground truth to the predicted location (RMSE in pixels), whereas the y axis is the fraction of frames considered accurate (e.g., z80% of frames fall within 9 pixels, even on this small training dataset, for points that are not corrupted, whereas for swapped points this falls to z65%). The fraction of the dataset that is corrupted affects this value. Shown is when missing the tailbase label (top) or swapping the ears in 1%, 5%, 10%, and 20% of frames (of 106 labeled training images). Swapping versus missing labels has a more notable adverse effect on network performance.

+(A) Illustration of two types of labeling errors. Top is ground truth, middle is missing a label at the tailbase, and

+bottom is if the labeler swapped the ear identity (left to right, etc.). (B) Using a small training dataset of 106

+frames, how do the corruptions in (A) affect the percent of correct keypoints (PCK) on the test set as the distance

+to ground truth increases from 0 pixels (perfect prediction) to 20 pixels (larger error)? The x axis denotes the

+difference in the ground truth to the predicted location (RMSE in pixels), whereas the y axis is the fraction of

+frames considered accurate (e.g., z80% of frames fall within 9 pixels, even on this small training dataset, for

+points that are not corrupted, whereas for swapped points this falls to z65%). The fraction of the dataset that is

+corrupted affects this value. Shown is when missing the tailbase label (top) or swapping the ears in 1%, 5%, 10%,

+and 20% of frames (of 106 labeled training images). Swapping versus missing labels has a more notable adverse effect

+on network performance.

```

-The DeepLabCut toolbox supports **active learning** by extracting outlier frames be several methods and allowing the user to correct the frames, then retrain the model. See the [Nature Protocols paper](https://www.nature.com/articles/s41596-019-0176-0) for the detailed steps, or in the docs, [here](https://deeplabcut.github.io/DeepLabCut/docs/standardDeepLabCut_UserGuide.html#m-optional-active-learning-network-refinement-extract-outlier-frames).

+The DeepLabCut toolbox supports **active learning** by extracting outlier frames by several methods and allowing the

+user to correct the frames, then retrain the model. See the

+[Nature Protocols paper](https://www.nature.com/articles/s41596-019-0176-0) for the detailed steps, or see the docs

+[here](active-learning).

-To facilitate this process, here we propose a new way to detect 'outlier frames', which is planned to be released in ~Sept 2022. Your contributions and suggestions are welcomed, so test the [PR](https://github.com/DeepLabCut/napari-deeplabcut/pull/38) and give us feedback!

+To facilitate this process, we propose a new way to detect 'outlier frames'.

+Your contributions and suggestions are welcome, so test the

+[PR](https://github.com/DeepLabCut/napari-deeplabcut/pull/38) and give us feedback!

-This #cookbook recipe aims to show a usecase of **clustering in napari** and is contributed by 2022 DLC AI Resident [Sabrina Benas](https://twitter.com/Sabrineiitor) 💜.

+This #cookbook recipe aims to show a use case of **clustering in napari** and is contributed by 2022 DLC AI Resident

+[Sabrina Benas](https://twitter.com/Sabrineiitor) 💜.

## Detect Outliers to Refine Labels

### Open `napari` and the `DeepLabCut plugin`

- - Then open your `CollectedData_ +

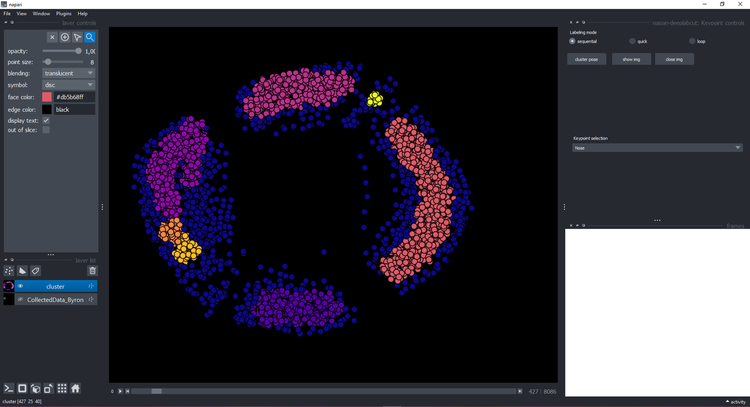

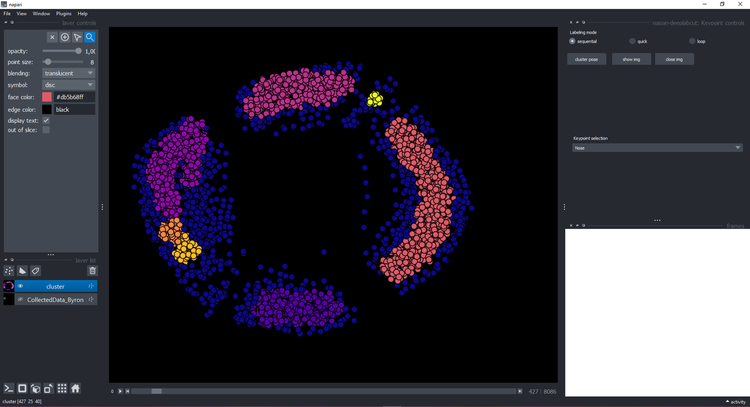

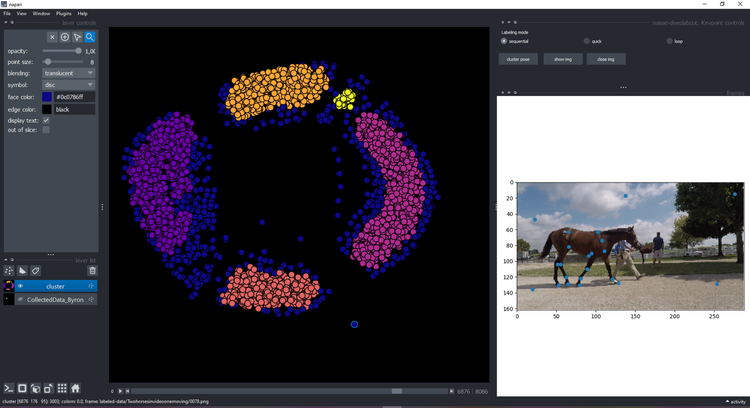

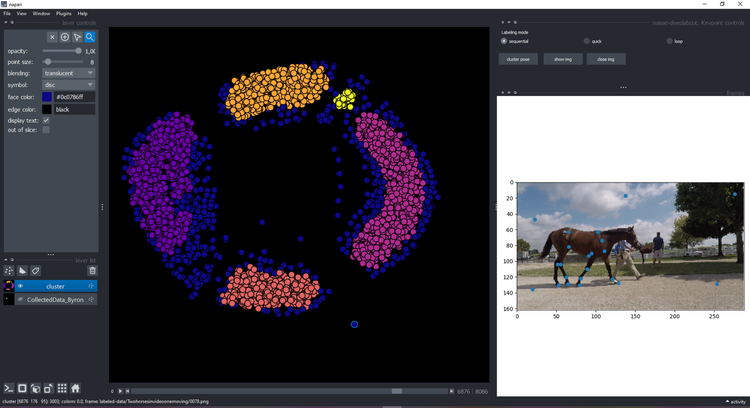

### Clustering

-- Click on the button `cluster` and wait a few seconds until it displays a new layer with the cluster:

+Click on the button `cluster` and wait a few seconds until it displays a new layer with the cluster:

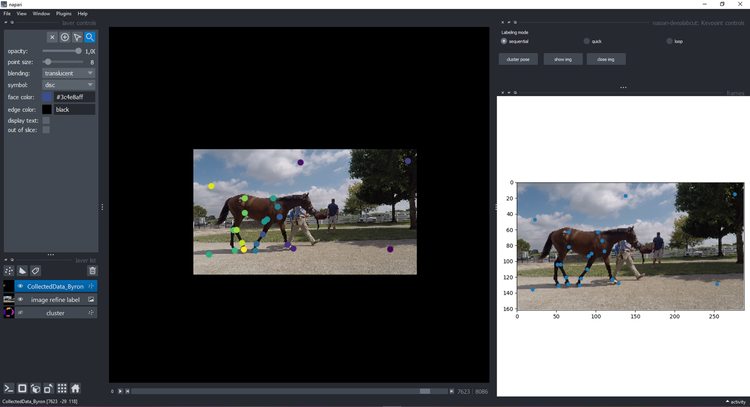

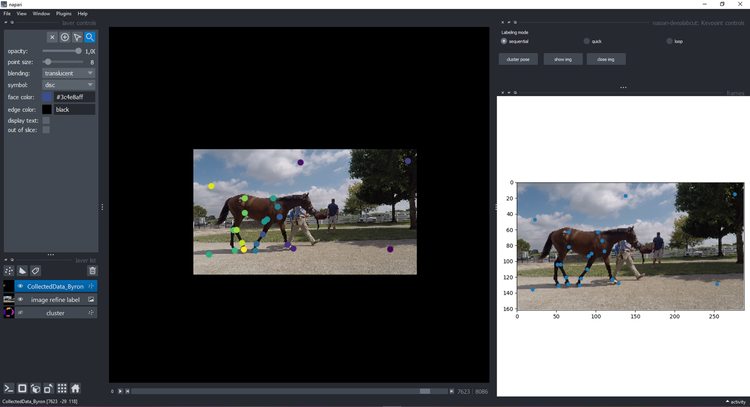

You can click on a point and see the image on the right with the keypoints:

### Visualize & refine

-If you decided to refine that frame (we moved the points to make outliers obvious), click `show img` and refine them using the plugin features and instructions:

+If you decided to refine that frame (we moved the points to make outliers obvious), click `show img` and refine them

+using the plugin features and instructions:

- ```{Attention}

- When you're done, you need to click `ctl-s` to save it.

+```{Attention}

+When you're done, you need to press `Ctrl+S` to save it.

```

-- You can go back to the cluster layer by clicking on `close img` and refine another image. Reminder, when you're done editing you need to click `ctl-s` to save your work. And now you can take the updated `CollectedData` file, create and **new training shuffle**, and train the network! Read more about how to [create a training dataset](https://deeplabcut.github.io/DeepLabCut/docs/standardDeepLabCut_UserGuide.html#f-create-training-dataset-s).

+You can go back to the cluster layer by clicking on `close img` and refine another image. Remember to press `Ctrl+S`

+when you're done editing to save your work. You can then take the updated `CollectedData` file, create

+a **new training shuffle**, and train the network! Read more about how to

+[create a training dataset](create-training-dataset).

```{hint}

-If you want to change the clustering method, you can modify the file [kmeans.py](https://github.com/DeepLabCutAIResidency/napari-deeplabcut/blob/cluster1/src/napari_deeplabcut/kmeans.py)

+If you want to change the clustering method, you can modify the file

+[kmeans.py](https://github.com/DeepLabCutAIResidency/napari-deeplabcut/blob/cluster1/src/napari_deeplabcut/kmeans.py)

+```

::::{important}

-You have to keep the way the file is opened (pandas dataframe) and the output has to be the cluster points, the points colors in the cluster colors and the frame names (in this order).

+You have to keep the way the file is opened (as a pandas dataframe), and the output has to be the cluster points, the

+point colors (in the cluster colors), and the frame names (in this order).

::::

```

diff --git a/docs/recipes/DLCMethods.md b/docs/recipes/DLCMethods.md

index b2f7a5a7af..74d5ec4c65 100644

--- a/docs/recipes/DLCMethods.md

+++ b/docs/recipes/DLCMethods.md

@@ -2,7 +2,13 @@

**Pose estimation using DeepLabCut**

-For body part tracking we used DeepLabCut (version 2.X.X) [Mathis et al, 2018, Nath et al, 2019]. Specifically, we labeled X number of frames taken from X videos/animals (then X% was used for training (default is 95%). We used a X-based neural network (i.e., X = ResNet-50, ResNet-101, MobileNetV2-0.35, MobileNetV2-0.5, MobileNetV2-0.75, MobileNetV2-1, EfficientNet ..X, dlcrnet_ms5, etc.)*** with default parameters* for X number of training iterations. We validated with X number of shuffles, and found the test error was: X pixels, train: X pixels (image size was X by X). We then used a p-cutoff of X (i.e. 0.9) to condition the X,Y coordinates for future analysis. This network was then used to analyze videos from similar experimental settings.

+For body part tracking we used DeepLabCut (version 3.X.X) [Mathis et al, 2018, Nath et al, 2019]. Specifically, we

+labeled X number of frames taken from X videos/animals (X% of which was used for training; the default is 95%). We used an

+X-based neural network (e.g., X = ResNet-50, ResNet-101, MobileNetV2-0.35, MobileNetV2-0.5, MobileNetV2-0.75,

+MobileNetV2-1, EfficientNet ..X, dlcrnet_ms5, cspnext_s, dekr_w32, rtmpose_s, etc.)*** with default parameters* for X

+number of training iterations. We validated with X number of shuffles, and found the test error was: X pixels, train:

+X pixels (image size was X by X). We then used a p-cutoff of X (e.g., 0.9) to condition the X,Y coordinates for future

+analysis. This network was then used to analyze videos from similar experimental settings.

*If any defaults were changed in *`pose_config.yaml`*, mention them.
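
The p-cutoff step mentioned in the template above can be sketched as follows. This is a minimal illustration, assuming each body part's predictions are available as per-frame `(x, y, likelihood)` tuples. The helper name `apply_p_cutoff` and the toy values are hypothetical and not part of the DeepLabCut API.

```python
import math


def apply_p_cutoff(predictions, p_cutoff=0.9):
    """Return a copy of the predictions with low-confidence coordinates masked.

    `predictions` is a list of (x, y, likelihood) tuples for one body part,
    one tuple per frame. Coordinates whose likelihood is below `p_cutoff`
    are replaced with NaN so later analysis can skip or interpolate them.
    """
    masked = []
    for x, y, likelihood in predictions:
        if likelihood < p_cutoff:
            masked.append((math.nan, math.nan, likelihood))
        else:
            masked.append((x, y, likelihood))
    return masked


# Toy predictions, made up for illustration only.
predictions = [(101.2, 55.0, 0.99), (180.4, 60.1, 0.35), (103.0, 56.2, 0.92)]
filtered = apply_p_cutoff(predictions, p_cutoff=0.9)

# Indices of frames whose coordinates survived the cutoff.
kept = [i for i, (x, _, _) in enumerate(filtered) if not math.isnan(x)]
print(kept)  # [0, 2]
```

Masking with NaN rather than dropping frames keeps the frame index aligned with the video, which matters for any later time-based analysis.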

@@ -43,4 +49,5 @@ If you use ResNets, consider citing Insafutdinov et al 2016 & He et al 2016. If

> 770–778 (2016). URL https://arxiv.org/abs/

> 1512.03385.

-We also have the network graphic freely available on SciDraw.io if you'd like to use it! https://scidraw.io/drawing/290. If you use our DLC logo, please include the TM symbol, thank you!

+We also have the network graphic freely available on SciDraw.io if you'd like to use it! https://scidraw.io/drawing/290.

+If you use our DLC logo, please include the TM symbol, thank you!

diff --git a/docs/recipes/MegaDetectorDLCLive.md b/docs/recipes/MegaDetectorDLCLive.md

index 20e35a38fc..8af6f59d1b 100644

--- a/docs/recipes/MegaDetectorDLCLive.md

+++ b/docs/recipes/MegaDetectorDLCLive.md

@@ -14,7 +14,9 @@ MegaDetector detects an animal and generates a bounding box around the animal. T

## DeepLabCut-Live

-DeepLabCut-Live! is a real-time package for running DeepLabCut. However, you can also use it as a lighter-weight package for running DeeplabCut even if you don't need real-time. It's very useful to use in HPC or servers, or in Apps, as we do here. To read more, check out the [docs](https://deeplabcut.github.io/DeepLabCut/docs/deeplabcutlive.html).

+DeepLabCut-Live! is a real-time package for running DeepLabCut. However, you can also use it as a lighter-weight

+package for running DeepLabCut even if you don't need real-time inference. It is very useful on HPC clusters or

+servers, or in apps, as we do here. To read more, check out the [docs](deeplabcut-live).

### MegaDetector meets DeepLabCut

diff --git a/docs/recipes/OpenVINO.md b/docs/recipes/OpenVINO.md

index 2033045ce8..78ea18e82f 100644

--- a/docs/recipes/OpenVINO.md

+++ b/docs/recipes/OpenVINO.md

@@ -1,10 +1,15 @@

# Intel OpenVINO backend

+::::{warning}

+This feature is currently implemented for TensorFlow-based models only.

+::::

+

DeepLabCut provides an option to run deep learning models with the [OpenVINO](https://github.com/openvinotoolkit/openvino) backend.

-To enable OpenVINO in your pipeline, use `use_openvino` flag of `analyze_videos` method with one of string values indicating device:

-* "CPU" - Use CPU. This is a default value.

-* "GPU" - Use iGPU (requires OpenCL to be installed). First launch might take some time for kernels initialization.

-* "MULTI:CPU,GPU" - Use CPU and GPU simultaneously. In most cases this option provides the best efficiency.

+To enable OpenVINO in your pipeline, set the `use_openvino` argument of the `analyze_videos` method to one of the

+following string values indicating the device:

+* ```"CPU"``` - Use the CPU. This is the default value.

+* ```"GPU"``` - Use the GPU (requires OpenCL to be installed). The first launch might take some time for kernel initialization.

+* ```"MULTI:CPU,GPU"``` - Use the CPU and GPU simultaneously. In most cases this option provides the best efficiency.

```python

def analyze_videos(

diff --git a/docs/recipes/UsingModelZooPupil.md b/docs/recipes/UsingModelZooPupil.md

index 2aac4a4d00..7ea4107d75 100644

--- a/docs/recipes/UsingModelZooPupil.md

+++ b/docs/recipes/UsingModelZooPupil.md

@@ -1,17 +1,25 @@

# Using ModelZoo models on your own datasets

-

diff --git a/docs/recipes/DLCMethods.md b/docs/recipes/DLCMethods.md

index b2f7a5a7af..74d5ec4c65 100644

--- a/docs/recipes/DLCMethods.md

+++ b/docs/recipes/DLCMethods.md

@@ -2,7 +2,13 @@

**Pose estimation using DeepLabCut**

-For body part tracking we used DeepLabCut (version 2.X.X) [Mathis et al, 2018, Nath et al, 2019]. Specifically, we labeled X number of frames taken from X videos/animals (then X% was used for training (default is 95%). We used a X-based neural network (i.e., X = ResNet-50, ResNet-101, MobileNetV2-0.35, MobileNetV2-0.5, MobileNetV2-0.75, MobileNetV2-1, EfficientNet ..X, dlcrnet_ms5, etc.)*** with default parameters* for X number of training iterations. We validated with X number of shuffles, and found the test error was: X pixels, train: X pixels (image size was X by X). We then used a p-cutoff of X (i.e. 0.9) to condition the X,Y coordinates for future analysis. This network was then used to analyze videos from similar experimental settings.

+For body part tracking we used DeepLabCut (version 3.X.X) [Mathis et al, 2018, Nath et al, 2019]. Specifically, we

+labeled X number of frames taken from X videos/animals (X% of which was used for training; the default is 95%). We used an